Closing the Feedback Loop: The Discipline of Telling Customers What Happened to Their Input

Here's the trust destruction sequence, played out in thousands of mid-market SaaS companies every year. A customer shares a specific piece of feedback in a QBR, a support ticket, a beta session, or an NPS response. The CSM says "I'll pass that along." Product receives it, logs it, and makes a decision about what to do with it. Nobody tells the CSM what that decision was. The CSM can't tell the customer. The customer gives feedback again six months later, slightly more frustrated. The CSM says "I'll pass that along." The customer stops giving feedback. By the time the account is flagged as a churn risk, nobody realizes that the signal was there two years earlier and died in a pipeline that never closed.

The CS & Product alignment glossary defines the VoC, NPS, and QBR terms that appear throughout this article. Both CS and Product must use these terms consistently for any closure system to work.

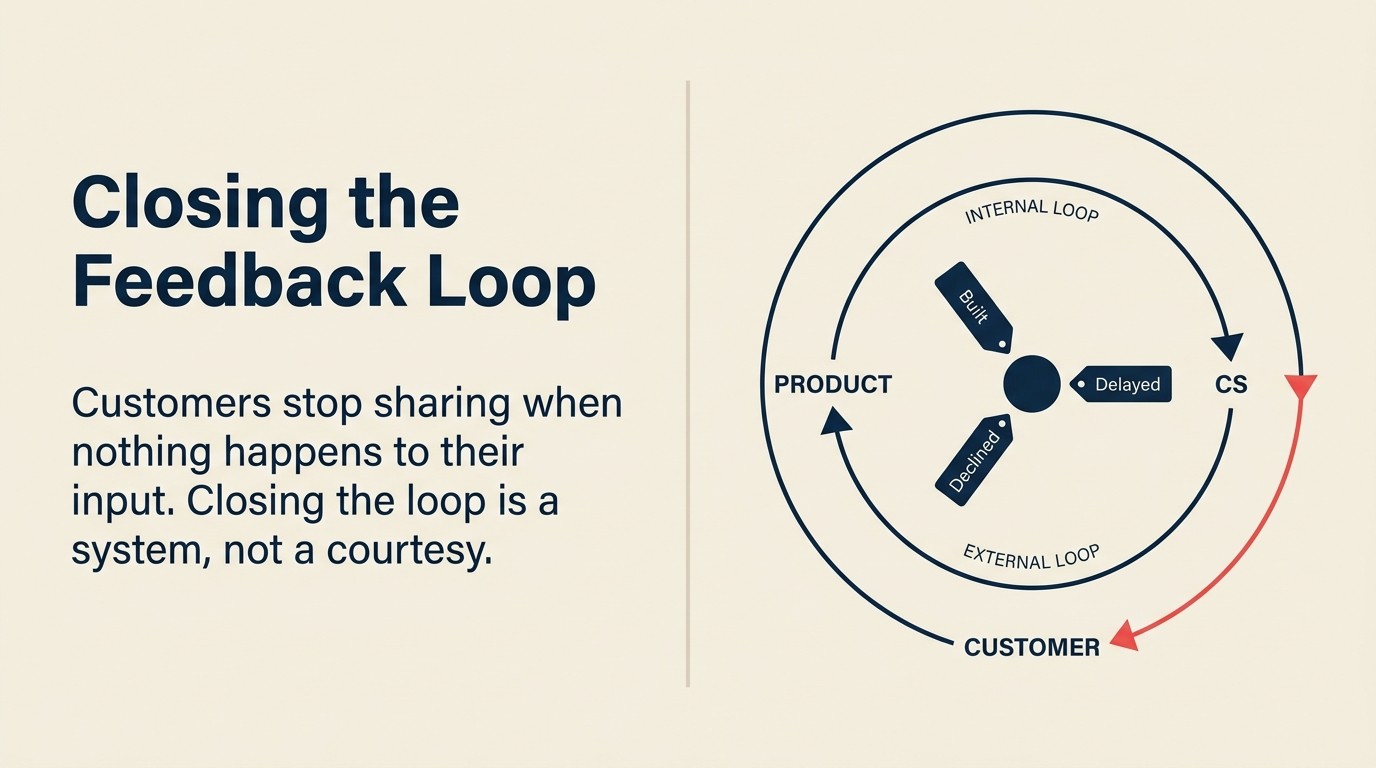

The destruction isn't deliberate. Most CS teams genuinely try to collect and forward feedback. Most Product teams genuinely make decisions about what to build. The problem is structural: there's no system that connects Product's decisions back to CS, and no system that connects CS's closure back to the customer. The feedback goes in one side and nothing comes out the other. The loop stays open. And an open loop, sustained long enough, destroys the willingness to give feedback at all.

Closing the feedback loop is not a relationship gesture. It's an operational discipline. It requires infrastructure, a defined rhythm, and explicit obligations on both CS and Product. HBR's foundational research on closing the customer feedback loop, based on Bain's NPS work across hundreds of companies, found that the greatest impact comes from relaying feedback results immediately to those who served the customer and empowering them to act, not from aggregating into quarterly reports that arrive too late to matter. And critically, the internal loop between Product and CS must close before the external loop between CS and the customer is even possible. You can't tell customers what happened to their input if nobody told you.

The Internal-First Feedback Loop Discipline is the operating principle this article defines: the internal loop (Product to CS) must close before the external loop (CS to customer) is possible. There are exactly three valid dispositions for every piece of structured feedback (built, declined, or queued), and "I don't know" is not one of them. The discipline runs in two stages. Stage one is the internal loop: Product communicates a disposition for every piece of structured feedback to CS, in a structured format, on a defined monthly rhythm. Stage two is the external loop: CS routes that disposition to the right CSM, who communicates it to the customer within five business days using the disposition-appropriate language. Neither stage works without the other. Skipping stage one and attempting stage two leaves CSMs improvising explanations for decisions they were never briefed on, which is routinely worse than no response at all.

What "Closing the Loop" Actually Means

A closed loop has a specific operational definition: for every piece of structured feedback collected from a customer, the customer receives a response that explains what happened to it.

That definition has three parts worth unpacking.

"Structured feedback": not every comment, not every offhand observation in a call, not every wish-list item that surfaces in passing. Structured feedback is feedback that was deliberately solicited and recorded: NPS verbatims, QBR feedback items, beta session notes, advisory board inputs, formal feature requests submitted through a defined channel. Informal signals belong in CSM account notes. Structured feedback is what needs a disposition.

"Receives a response": not "the CSM thought about it," not "it went into the backlog," not "Product is aware of it." The customer receives communication from the CSM, proactively, that closes the item specifically.

"What happened to it": which is one of three things, and only three:

Built. The feedback directly informed a feature or change that shipped. The response: "The capability you raised has shipped as part of [feature name]. Here's where you'll find it and how to activate it. Your input was part of why we built it this way." This is the easiest closure to deliver. It's also the one most commonly skipped, because "they'll see the release notes" is not the same as "we told them we heard them."

Declined. The feedback was considered and the decision was made not to act on it, in this release cycle or the foreseeable future. The response: "We reviewed your input on [specific item]. We're not going to implement this right now, because [specific reason: not 'we're focusing on other priorities,' but the actual reason: scope, technical constraint, ICP fit, conflicting demand, strategic direction]. We appreciate the specificity you gave us." Declined is the hardest disposition to deliver and the most important. Customers who get a specific "no and here's why" respect the transparency. Customers who get silence for a declined item assume the feedback was never read.

Queued. The feedback is acknowledged and on the radar, but not scheduled for the near-term roadmap. The response: "We've logged this and it's under active consideration for a future cycle. We don't have a timeline yet, but we'll revisit it when [specific trigger: category review, next roadmap planning cycle, when we have more data on usage patterns]. I'll flag this for you when there's an update." The CSM sets a calendar reminder for the stated review cycle. Queued is not a dumping ground. It's a real commitment to follow up.

The three dispositions cover every possible outcome for a piece of feedback. There is no fourth option. "I don't know" is not a disposition. It means the internal loop hasn't closed yet. "We're looking into it" with no follow-up commitment is not a disposition. It's a deferral.

Key Facts: The Cost of an Open Feedback Loop

- Customers who submit structured feedback and receive no response within 60 days are 3.4x more likely to report low confidence in the vendor's product development process in their next NPS survey (Gainsight, 2024).

- CSMs who can communicate specific dispositions for customer feedback ("that feature shipped in March" or "we declined that request and here's why") report 2.1x higher account engagement in quarterly reviews compared to CSMs who can only say "I passed it along" (ChurnZero, 2024).

- Customers who learn that feedback they submitted led to a shipped feature are 3.8x more likely to submit detailed feedback in the next request cycle (Totango, 2024).

Why Most Companies Don't Do This

The system failure is predictable once you see it. And it's not that CS doesn't care or Product doesn't care. It's that nobody built the infrastructure to connect the two.

CS collects feedback; Product never tells CS what happened; CSMs can't close what they weren't given. This is the most common form. CSMs dutifully capture feedback in QBRs, forward it through whatever channel exists (email, Slack, the VoC pipeline if one has been built), and then wait. Product makes decisions. Some items ship, some get declined, many get deferred. Nobody triggers a notification back to CS. The CSM checks in with the customer six months later and has no idea what happened to the items from the last conversation.

The feedback lives in a backlog tool nobody in CS has access to. Jira, Productboard, Aha: whatever the PM team uses to track roadmap items. CS can't see it. Even if CS could see it, they can't interpret the status labels without context. And nobody has built the export or notification process that surfaces relevant dispositions to the right CSM at the right time.

No defined rhythm for Product to push dispositions back to CS. Even when Product is making decisions, the communication cadence to CS is ad hoc. A PM mentions in a cross-functional meeting that a feature shipped. A Slack message goes out to #product-updates. The CSM who manages the account that requested it wasn't in the meeting and missed the Slack. The loop stays open by accident, not by intent.

Volume. A mid-market CS team with 80 accounts, each submitting 3-5 pieces of structured feedback per quarter, takes in 240-400 new feedback items every quarter. Manual tracking in a spreadsheet collapses under that volume. Without system support (tagging in the CS platform, automated status updates, batch processing through EBRs), individual CSMs can't maintain closure on that volume alone. The capturing feedback systematically playbook covers the tagging taxonomy and logging discipline that make the volume manageable. But before CS can close the loop externally, Product has to close it internally first.

The Internal Loop First: Product Closing the Loop with CS

Before the external loop (CS to customer) is possible, the internal loop (Product to CS) must close. This is the obligation that most process designs skip or assume will happen naturally. It doesn't happen naturally. It requires a formal mechanism.

Product's obligation. When a piece of customer feedback is actioned, declined, or deferred to a specific future cycle, CS gets notified. Not in a casual Slack message that CSMs have to dig for. In a structured format that includes everything CS needs to close the external loop.

The disposition record format:

| Field | Contents |
|---|---|
| Feature / request name | Specific description, same language CS used when capturing |
| Customers who submitted it | Account names, CSM contact |
| Outcome | Built / Declined / Queued |
| Timeline or reason | Ship date (built) / Specific reason (declined) / Next review cycle (queued) |
| CSM action required | Yes / No: does this item require proactive customer outreach? |
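
As a rough illustration, the record above could be modeled as a small typed structure. This is a sketch, not a prescribed schema: the class and field names (`DispositionRecord`, `detail`, `csm_action_required`) are hypothetical, and the only rule it enforces is the one the table implies, that the timeline-or-reason field is never left blank.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    """The three valid dispositions. There is deliberately no 'unknown'."""
    BUILT = "built"
    DECLINED = "declined"
    QUEUED = "queued"


@dataclass(frozen=True)
class DispositionRecord:
    request_name: str          # same wording CS used when capturing the item
    accounts: list[str]        # accounts that submitted the request
    csm_contact: str           # CSM who owns the customer conversation
    outcome: Outcome
    detail: str                # ship date, specific reason, or next review cycle
    csm_action_required: bool  # True = proactive customer outreach needed

    def __post_init__(self) -> None:
        # A disposition without a date or reason cannot be relayed to a customer.
        if not self.detail.strip():
            raise ValueError("detail (timeline or reason) must not be empty")


# Hypothetical example record
record = DispositionRecord(
    request_name="Bulk export of account notes",
    accounts=["Acme"],
    csm_contact="dana@example.com",
    outcome=Outcome.DECLINED,
    detail="Conflicts with the planned reporting module",
    csm_action_required=True,
)
```

Restricting `outcome` to an enum means a record with "I don't know" as its status simply cannot be created, which mirrors the rule that only three dispositions exist.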

The delivery rhythm. Monthly digest from PM to CS: a structured list of all items that received a disposition in the past 30 days. Plus ad hoc for high-priority items. If a feature that multiple accounts requested just shipped, CS shouldn't wait 30 days to find out. The monthly digest covers the majority; ad hoc covers the urgency.

What CS does with the disposition record. Routes it to the right CSMs. The CSM whose account submitted the item gets the specific disposition record and the CSM action flag. If the action flag is yes, the CSM has five business days to reach out to the customer. If the action flag is no (low-priority items where the customer was unlikely to be tracking the outcome), the CSM adds it to the account notes for reference at the next EBR.
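
The routing rule and the five-business-day clock can be sketched in a few lines. This assumes a Monday-to-Friday work week and ignores public holidays; the function names and the returned keys are illustrative and do not correspond to any CS platform's API.

```python
from datetime import date, timedelta


def add_business_days(start: date, days: int) -> date:
    """Return the date `days` business days after `start` (Mon-Fri, no holidays)."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday to Friday
            remaining -= 1
    return current


def route_disposition(action_required: bool, received: date) -> dict:
    """Decide what the CSM does with a disposition record received on `received`."""
    if action_required:
        # Action flag is yes: proactive outreach within five business days.
        return {"next_step": "proactive_outreach",
                "due": add_business_days(received, 5)}
    # Action flag is no: keep it in account notes for the next EBR.
    return {"next_step": "add_to_account_notes_for_next_ebr", "due": None}


# A record received on Monday 2024-06-03 is due the following Monday.
print(route_disposition(True, date(2024, 6, 3)))
```

A team with regional holidays would need a holiday calendar on top of this; the weekday check alone is the simplest version that still makes the deadline explicit.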

The External Loop: CS Closing the Loop with Customers

With the internal loop functioning, the external loop is straightforward. The CSM has the disposition. The CSM has the timeline. The CSM reaches out.

For "built" items. Don't wait for the customer to find it in the release notes. Reach out proactively: "We shipped the [feature name] you raised in our March QBR. It's available in [specific location]. I wanted to make sure you knew this was directly informed by your input: here's how it connects to what you described." The proactive outreach is what transforms a release into a relationship signal. The customer learns that their feedback was heard, tracked, and acted on, not that they got lucky and happened to see a release note.

For "declined" items. This conversation is harder, and it's the one most CSMs instinctively avoid. But the avoidance is what damages trust. The framing: "I wanted to close the loop on [specific request] that you raised in [context]. After reviewing it, the Product team decided not to pursue this in the current or near-term roadmap. The reason: [specific, honest explanation, not a brush-off, not vague redirects]. I know that's not what you hoped to hear. I wanted to tell you directly rather than let it go unanswered." Most customers who receive a specific, honest "no" respond with appreciation for the transparency. Many of them provide more useful feedback in response ("if that's the constraint, what about this alternative approach?"), which the CSM can route back into the VoC pipeline.

For "queued" items. Set a calendar reminder for the stated review cycle. At that point, either the item was reviewed and has a new disposition (deliver it), or it wasn't reviewed (tell the customer the timing pushed and what the new expected review date is). Don't let queued items silently expire into declined without telling the customer. An item that was queued and then never revisited is a broken promise.

Timing. Within five business days of the internal disposition notification. Not when it's convenient. Not at the next scheduled EBR if that's three months away. Five business days is the standard because it's the window in which the customer still associates the response with the feedback they gave. After that, the closure lands as administrative rather than responsive.

Making This Scalable

The process described above works for a team of five CSMs managing 40 accounts. It doesn't work without system support for a team of 30 CSMs managing 600 accounts.

Feedback tracking in the CS platform, not a spreadsheet. Every piece of structured feedback submitted by a customer should be logged in the CS platform with a status field: open / built / declined / queued. When Product delivers a monthly disposition digest, CS Ops updates the status field for each item. CSMs see the status change surfaced in their account view. The manual tracking burden moves from individual CSMs to CS Ops, which can manage it systematically.

Automated triggers when Product marks feedback as shipped. The most powerful version: a direct integration between the product roadmap tool (Productboard, Aha) and the CS platform (Gainsight, ChurnZero). When a PM marks a feature as shipped, an automatic task surfaces for the relevant CSMs to close the loop with the accounts that requested it. This removes the dependency on the monthly digest for "built" items, the most time-sensitive category, because customers who requested something and see it ship before being notified feel less ownership over the outcome.
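
The core of such a trigger is a simple fan-out from one shipped feature to the CSMs whose accounts asked for it. The sketch below works on plain in-memory data and makes no assumption about how Productboard, Aha, Gainsight, or ChurnZero expose this information; the function name, the data shapes, and the account and CSM names are all hypothetical.

```python
from collections import defaultdict


def closure_tasks(shipped_feature, requests, csm_by_account):
    """Group requesting accounts by CSM for a feature that just shipped.

    requests: iterable of (account, feature) pairs logged as structured feedback.
    csm_by_account: mapping of account name to the owning CSM.
    Returns {csm: [accounts to contact]}.
    """
    tasks = defaultdict(set)
    for account, feature in requests:
        if feature == shipped_feature:
            tasks[csm_by_account[account]].add(account)
    return {csm: sorted(accounts) for csm, accounts in tasks.items()}


requests = [
    ("Acme", "Bulk export"),
    ("Globex", "Bulk export"),
    ("Initech", "Custom roles"),
]
csm_by_account = {"Acme": "Dana", "Globex": "Sam", "Initech": "Dana"}

print(closure_tasks("Bulk export", requests, csm_by_account))
```

This only works if the feature name in the roadmap tool matches the name CS used when logging the request, which is why the disposition record insists on the same language CS used when capturing.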

Batching through EBRs. Not every closed item warrants a standalone outreach. For low-priority queued items or declined items from accounts that weren't tracking them closely, the EBR is the right venue: "I want to run through a few items from our feedback backlog and close those out." The CSM reviews 3-5 open items in one conversation rather than sending 3-5 separate emails. The discipline is that "covering it in the next EBR" is a commitment, not a deferral. The EBR happens on schedule.

The quarterly feedback review as the formal close-the-loop moment. The quarterly customer feedback review is the structured cadence where all open items from the past quarter receive a disposition, or a documented reason why they're being carried forward. Every item that was submitted in the past 90 days should have a status by the end of that review. CSMs then close the remaining open loops in their accounts within the two weeks following the review.

The High-Stakes Version: Beta and Co-Design Participants

Customers who invested time in beta or co-design programs have a higher expectation of feedback loop closure than general customers. They didn't submit a request through a form. They spent hours in sessions evaluating what you were building. When the feature ships and nobody tells them what their input produced, the abandonment feels personal.

The closure for beta and co-design participants is a dedicated 30-minute debrief call, not an email. The PM runs it (with the CSM present for relationship management). The agenda: here's what we built, here's where your input directly shaped it, here's what we decided not to implement and why. The written summary follows within 48 hours: the same content in permanent form, so there's no ambiguity later about what was committed and what wasn't. See running customer beta programs and customer co-design operations for how each program type structures the engagement that produces the feedback requiring this close-out.

This debrief is the highest-value investment in the entire beta or co-design program. It's what turns participants into advocates, not because the feature was perfect, but because they felt genuinely consulted rather than extracted from.

What Breaks When the Loop Stays Open

Survey fatigue. McKinsey's analysis of survey fatigue makes clear that the problem isn't the frequency of surveys. It's the absence of visible action in response to them. Customers stop responding to NPS surveys, QBR feedback requests, and pulse checks. Not because they don't have opinions but because they've learned that their opinions produce no observable outcome. The feedback participation rate declining quarter over quarter is a symptom of loop failures from previous quarters, not a sign that the customer is satisfied and has nothing to say.

"I'll pass that along" becomes a punchline. CSMs who can't close loops stop saying anything definitive about feedback outcomes because they've been burned before. They said "I'll find out and let you know" and then didn't have anything to say at the next check-in. The phrase becomes a signal that the feedback died. Customers start prefacing their own input with "I know this probably won't go anywhere, but..." That prefix is a trust failure, surfaced in real time.

Retention signal lost. Customers who mentally check out of the vendor relationship typically stop giving feedback before they stop responding to renewal conversations. The declining feedback engagement rate is an early warning signal for churn, but only if someone is tracking it. An account that went from five feedback items in Q1 to zero in Q3 without a closed loop for any of the Q1 items is an account that disengaged from the relationship six months before the renewal risk showed up in the dashboard. The usage tracking and analytics layer is what makes this early disengagement signal visible before it appears as a renewal conversation.
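
One way to make this signal trackable is to flag accounts whose feedback volume has dropped to zero while earlier items are still unclosed. The sketch below is an assumption about how a team might encode that rule, not a method the source prescribes; the function name, input shapes, and sample accounts are hypothetical.

```python
def disengagement_flags(counts_by_quarter, unclosed_items):
    """Flag accounts that went silent while earlier feedback is still unclosed.

    counts_by_quarter: {account: [items in Q1, items in Q2, ...]}, oldest first.
    unclosed_items: {account: number of items never closed with the customer}.
    """
    flagged = []
    for account, counts in counts_by_quarter.items():
        if len(counts) < 2:
            continue  # not enough history to see a trend
        # The account gave feedback earlier but none in the latest quarter.
        went_silent = max(counts[:-1]) > 0 and counts[-1] == 0
        if went_silent and unclosed_items.get(account, 0) > 0:
            flagged.append(account)
    return sorted(flagged)


counts = {"Acme": [5, 2, 0], "Globex": [3, 3, 4], "Initech": [2, 0, 0]}
unclosed = {"Acme": 5, "Initech": 0}

print(disengagement_flags(counts, unclosed))
```

Here Acme matches the pattern described above: five items in Q1, none in Q3, and nothing closed. Initech also went quiet, but every item it raised was closed, so silence there is weaker evidence of disengagement.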

The compounding effect. Each unclosed loop makes the next feedback collection harder. The first time nothing happens, the customer is uncertain. The second time, they're skeptical. The third time, they stop. By the time the CSM runs a formal feedback survey and wonders why the response rate is 12%, the compounding has already done its work.

Metrics That Prove the Loop Is Closed

Three metrics that can be tracked without expensive tooling:

Feedback disposition rate. The percentage of structured feedback items collected in a quarter that have a logged outcome (built / declined / queued) within 60 days of submission. This is the direct measure of whether the internal loop is functioning. Target: 80% or above. Below 60% means the internal loop is failing: Product isn't delivering dispositions to CS fast enough for CS to close with customers in a reasonable window. HBR's research on NPS 3.0 makes a related argument, that feedback programs need to be paired with earned-growth tracking, a metric structure that treats feedback response rate and feedback actioning rate as a pair rather than as independent signals.
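
The calculation is simple enough to run from a spreadsheet export. A minimal sketch, assuming each item carries a submission date and an optional disposition date; the `FeedbackItem` type and the `classify` labels are illustrative, while the 60-day window and the 80% and 60% thresholds come from the definition above.

```python
from datetime import date
from typing import NamedTuple, Optional


class FeedbackItem(NamedTuple):
    submitted: date
    disposition_date: Optional[date]  # None means no disposition logged yet


def disposition_rate(items, window_days=60):
    """Share of items with a logged outcome within `window_days` of submission."""
    if not items:
        return 0.0
    on_time = sum(
        1 for item in items
        if item.disposition_date is not None
        and (item.disposition_date - item.submitted).days <= window_days
    )
    return on_time / len(items)


def classify(rate):
    if rate >= 0.80:
        return "on target"
    if rate < 0.60:
        return "internal loop failing"
    return "below target"


items = [
    FeedbackItem(date(2024, 1, 10), date(2024, 2, 1)),
    FeedbackItem(date(2024, 1, 15), date(2024, 3, 1)),
    FeedbackItem(date(2024, 2, 1), date(2024, 2, 20)),
    FeedbackItem(date(2024, 2, 5), date(2024, 3, 30)),
    FeedbackItem(date(2024, 3, 1), None),  # still open
]

rate = disposition_rate(items)
print(f"{rate:.0%} -> {classify(rate)}")
```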

Customer acknowledgment rate. Of the "built" items in a given quarter, what percentage of them resulted in documented CSM outreach to the requesting customer? Target: 90% or above. This is the most commonly tracked metric and the easiest to game (CSMs can log outreach without it being meaningful), so pair it with the response rate on that outreach to verify quality.

Feedback recurrence rate. Are customers submitting the same request quarter after quarter? A customer who raises the same issue in three consecutive QBRs is telling you two things: the issue matters enough to keep raising, and they've never received a disposition that closed it for them. Track recurrence as a signal of loop failure, not customer persistence.
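
Recurrence can be detected mechanically once requests are logged under consistent names. The sketch below flags any request an account has raised in three or more consecutive quarters; the threshold of three echoes the example above but is an assumption, as are the function name and the tuple-based log format.

```python
from collections import defaultdict


def recurring_requests(log, min_consecutive=3):
    """Return (account, request) pairs raised in `min_consecutive` quarters in a row.

    log: iterable of (account, request, quarter_index) tuples, where
    quarter_index is a running integer (1, 2, 3, ...) across quarters.
    """
    quarters = defaultdict(set)
    for account, request, quarter in log:
        quarters[(account, request)].add(quarter)

    flagged = []
    for key, seen in quarters.items():
        longest = run = 0
        previous = None
        for quarter in sorted(seen):
            # Extend the run if this quarter directly follows the previous one.
            run = run + 1 if previous is not None and quarter == previous + 1 else 1
            longest = max(longest, run)
            previous = quarter
        if longest >= min_consecutive:
            flagged.append(key)
    return sorted(flagged)


log = [
    ("Acme", "SSO audit log", 1),
    ("Acme", "SSO audit log", 2),
    ("Acme", "SSO audit log", 3),
    ("Globex", "CSV export", 1),
    ("Globex", "CSV export", 3),
]

print(recurring_requests(log))
```

Each flagged pair is a candidate for an overdue disposition: the first question to ask is whether the customer was ever told built, declined, or queued.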

Building the Habit in a CS-Product Team Starting from Zero

The minimum viable version doesn't require a CS platform integration or a product management tool migration. It requires three things:

A shared document (accessible to both CS and Product) where structured feedback items are logged with status. Not a backlog tool. A shared document that both sides can update. Format: customer name / feedback item / date submitted / CSM owner / PM owner / status / disposition date.

A monthly PM-to-CS digest: 30 minutes in the calendar, attended by one PM per product area and the CS Ops lead, where the PM walks through every item that received a disposition in the past 30 days. CS Ops updates the shared document and routes to CSMs.

A CSM closure checklist: a standing item in the monthly CS team meeting where each CSM reports which open feedback items they closed with customers in the past 30 days and which remain open past the 60-day threshold.
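
Even the shared-document version can support the 60-day check in the closure checklist. The sketch below assumes the document is exported as CSV with one column per field in the format above and ISO-formatted dates; the column names, sample rows, and function name are all made up for illustration.

```python
import csv
import io
from datetime import date

# Hypothetical export of the shared document, one row per feedback item.
SAMPLE = (
    "customer,feedback_item,date_submitted,csm_owner,pm_owner,status,disposition_date\n"
    "Acme,Bulk export,2024-01-10,Dana,Lee,open,\n"
    "Acme,SSO audit log,2024-02-20,Dana,Lee,built,2024-03-15\n"
    "Globex,Custom roles,2024-03-01,Sam,Lee,open,\n"
)


def overdue_open_items(csv_text, today, threshold_days=60):
    """List (customer, feedback_item) pairs still open past the threshold."""
    rows = csv.DictReader(io.StringIO(csv_text))
    return [
        (row["customer"], row["feedback_item"])
        for row in rows
        if row["status"] == "open"
        and (today - date.fromisoformat(row["date_submitted"])).days > threshold_days
    ]


print(overdue_open_items(SAMPLE, today=date(2024, 4, 1)))
```

Running this before the monthly CS team meeting gives each CSM a ready list of items to report on, without anyone scanning the document by hand.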

The 90-day buildout from this baseline adds system support: feedback logging in the CS platform, disposition status synced from the roadmap tool, automated CSM tasks for "built" item notifications. But the baseline is functional without any of that. Most of the value comes from the rhythm, not the tooling.

"Companies with a formal feedback disposition process (defined outcomes, structured format, timed delivery from Product to CS) see 44% higher feedback submission rates from customers in subsequent quarters." (ProductBoard, 2024)

Who owns the process design. CS Ops designs the CSM-facing side (logging taxonomy, closure cadence, tracking metrics). Product Ops co-designs the Product-facing side (disposition format, delivery rhythm, escalation path for overdue items). Both sign off on the shared document structure and the monthly digest format. Neither side can design this alone. It's a seam problem, and seam problems require both sides at the table from the start.

Rework Analysis: The minimum viable feedback loop (shared document, monthly PM-to-CS digest, CSM closure checklist) is achievable without a dedicated CS platform. But at mid-market scale (80+ accounts, 240-400 open feedback items per quarter), the discipline breaks down without system support. Rework's unified platform lets CS Ops log structured feedback items alongside account health data, route disposition updates to named CSMs, and track closure rates as a team metric, all within the same environment where QBRs are prepared and renewal dates are managed. The five-business-day closure window is a commitment, not a target. The tooling is what makes it achievable at volume.

Frequently Asked Questions

What is the Internal-First Feedback Loop Discipline?

The Internal-First Feedback Loop Discipline is the operating principle that the internal loop (Product communicating a disposition to CS) must close before the external loop (CS communicating that disposition to the customer) is even possible. Closing the loop with customers is not a relationship gesture. It's an operational system with two stages and explicit obligations on both sides. The internal loop runs on a monthly Product-to-CS digest. The external loop runs on a five-business-day response window from the point CS receives the disposition.

What are the three valid dispositions for customer feedback?

Every piece of structured customer feedback has exactly one of three dispositions: built (the feedback directly informed a feature or change that shipped), declined (the feedback was considered and the decision was made not to act on it, with a specific reason), or queued (acknowledged, on the radar, not scheduled for near-term, with a defined trigger for follow-up). There is no fourth option. "I don't know" means the internal loop hasn't closed yet. "We're looking into it" with no follow-up commitment is a deferral, not a disposition.

Why is closing the loop on "declined" feedback the most important disposition to deliver?

Customers who receive a specific "no and here's why" respect the transparency more than customers who receive silence. Silence on a declined item is interpreted as the feedback never being read. The "declined" response is: "We reviewed your input on [specific item]. We're not going to implement this right now, because [specific reason: scope, technical constraint, ICP fit, or strategic direction]. We appreciate the specificity you gave us." 71% of customers who stopped responding to vendor surveys cite "nothing ever changes from our feedback" as the primary reason (Medallia, 2024). Many of those stopped because declined items were never communicated, not because built items failed to show up.

How often should Product push feedback dispositions to CS?

The standard delivery rhythm is a monthly digest from PM to CS: a structured list of all items that received a disposition in the past 30 days. Plus ad hoc notifications for high-priority items. Specifically, when a feature that multiple accounts requested just shipped, CS shouldn't wait 30 days to find out. The monthly digest covers the majority; ad hoc covers urgency. The digest should be a formal calendar item, not a Slack channel that CSMs have to monitor.

What does the disposition record format look like?

The disposition record Product delivers to CS should include five fields: the feature or request name (in the same language CS used when capturing it), the customers who submitted it (account names and CSM contact), the outcome (built / declined / queued), the timeline or reason (ship date for built, specific reason for declined, next review cycle for queued), and a CSM action flag (yes or no: does this item require proactive customer outreach). That last field matters. Not every disposition warrants a standalone conversation. Some can wait for the next EBR. The action flag tells CSMs which is which.

How do you scale feedback loop closure for large CS teams?

At mid-market scale (80 accounts, 240-400 new feedback items per quarter), manual tracking in a spreadsheet collapses. The scalable version requires three system supports: feedback items logged in the CS platform with a status field (open / built / declined / queued), automated status updates when Product marks items as shipped, and CS Ops-owned routing so individual CSMs don't have to track the full backlog. For "built" items specifically, automated triggers that surface a CSM task when a feature ships eliminate the 30-day digest lag and ensure the proactive outreach lands while the customer still associates the response with the feedback they gave.

How does feedback loop closure affect NPS and retention?

Customers who learn that feedback they submitted led to a shipped feature are 3.8x more likely to submit detailed feedback in the next request cycle (Totango, 2024). Customers who submit structured feedback and receive no response within 60 days are 3.4x more likely to report low confidence in the vendor's product development process in their next NPS survey (Gainsight, 2024). An account that goes from five feedback items in Q1 to zero in Q3, without a closed loop for any of the Q1 items, is an account that disengaged from the relationship six months before the renewal risk appeared in the dashboard.
