Customer-Impact Scoring: How to Quantify the Customer Case for Product Decisions

There's a backlog item that's been sitting at position 14 for three quarters. Eighteen accounts have flagged it. The PM team has acknowledged it. Nobody's moved it.

Meanwhile, a feature that two enterprise accounts requested in a QBR last month is now in the sprint. An executive attended that QBR. The HiPPO (highest-paid person's opinion) won again.

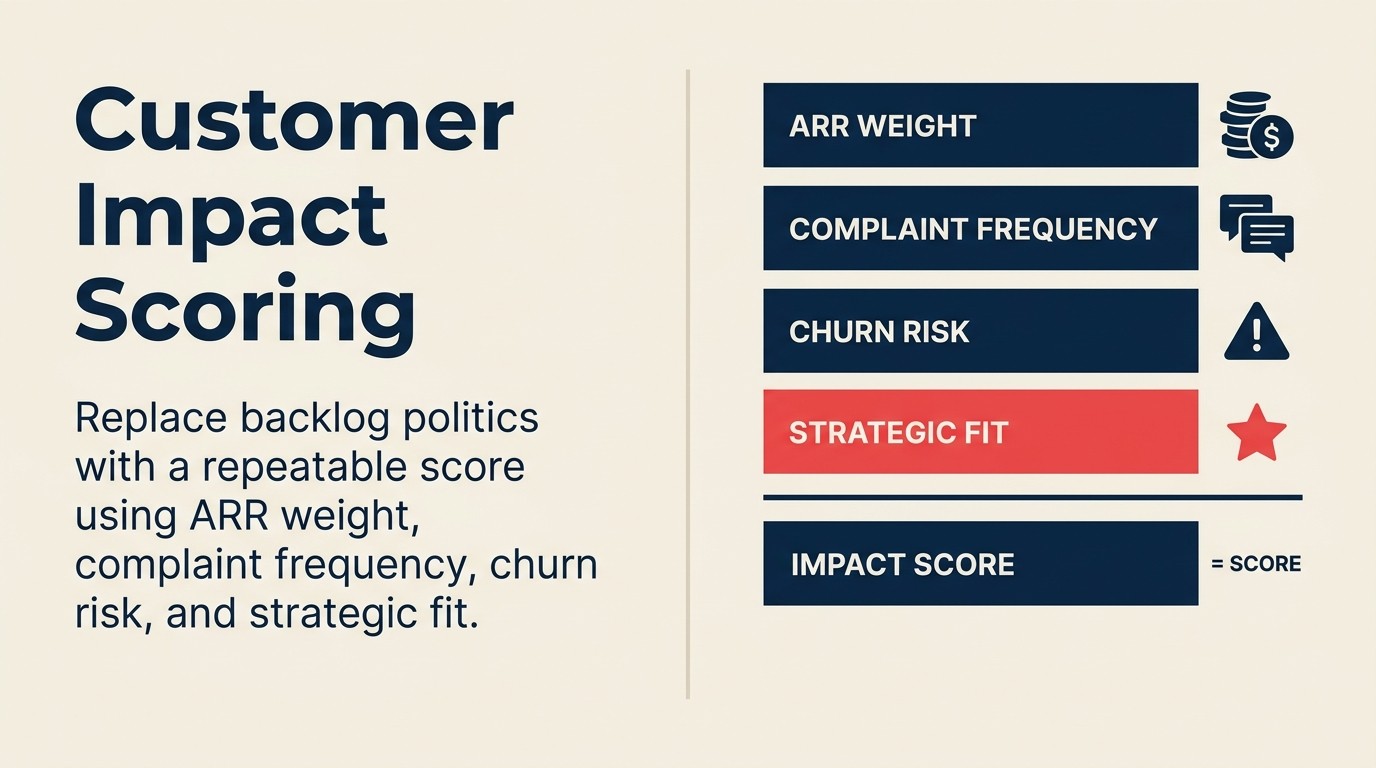

This is how product backlogs die: not from lack of customer feedback, but from lack of a repeatable system for weighing it. Without a scoring model, every prioritization decision is a negotiation. The outcome depends on who showed up, who spoke loudest, and who has the most organizational leverage.

Customer-impact scoring is the system that replaces that negotiation with a number, not to remove PM judgment, but to give judgment something to push off against. HBR's research on the HiPPO effect found that authority for strategic decisions routinely defaults to the highest-paid person's opinion, and that organizations need structured crowd-sourced or data-driven input specifically to counteract that tendency. The CS-product alignment glossary defines the key terms (ARR weight, churn risk coefficient, strategic account flag) so CS and product are calculating the same thing when they score the same backlog item.

The 4-Factor Customer Impact Score operationalizes that calculation into a composite number ranging from 0-100: ARR Affected (weighted 40%), Normalized Account Count (25%), At-Risk Account Weight with a 2.0 churn coefficient (20%), and Strategic Account Flag (15%). The weights sum to 100 and can be adjusted by business priority. Shift weight toward at-risk in retention-focused quarters, toward ARR in enterprise expansion plays. Document the rationale when you set the weights so the model is auditable quarter over quarter.

Why Raw Complaint Counts Fail

Before building the model, it helps to understand why the most common alternative (counting complaints) produces bad prioritization. Wikipedia's overview of requirement prioritization documents several structured approaches, including weighted scoring and the RICE model (Reach × Impact × Confidence ÷ Effort), that exist precisely because raw vote counts and gut-feel rankings produce systematically biased results.

Volume bias: The feature that 50 small customers want beats the feature that 3 enterprise customers need. If you're counting by account volume, you're building for quantity of accounts, not quality of revenue. A feature request from 50 accounts representing $250K ARR loses to a feature request from 3 accounts representing $900K ARR in any rational weighting model. But raw counts can't see that.

Squeaky wheel bias: Vocal customers dominate feedback channels. Customers who write detailed feature requests, show up to user research sessions, and respond to every NPS survey are not representative of your customer base. They're the most engaged quartile. The silent churners, the ones who stop using a feature, say nothing, and cancel at renewal, never appear in the complaint count.

Recency bias: Whatever came up this month gets prioritized over the chronic long-tail issue that's been reported for eight months. The long-tail issue has more total reports. But it got normalized ("that's just how the product works") while the fresh complaint feels urgent because it's new.

The scoring model addresses all three failure modes by normalizing across ARR, weighting at-risk accounts separately from healthy accounts, and looking at cumulative frequency rather than recency.
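To make the volume-bias point concrete, here is a minimal sketch that ranks the two requests from the example above, first by raw account count and then by ARR. The request names are hypothetical labels; the figures are the ones quoted in the volume-bias paragraph.

```python
# Two feature requests, using the figures from the volume-bias example.
# The names are illustrative labels, not real backlog items.
requests = [
    {"name": "SMB request", "accounts": 50, "arr": 250_000},
    {"name": "Enterprise request", "accounts": 3, "arr": 900_000},
]

# Raw complaint count: more accounts wins.
by_count = sorted(requests, key=lambda r: r["accounts"], reverse=True)

# ARR-weighted view: more revenue affected wins.
by_arr = sorted(requests, key=lambda r: r["arr"], reverse=True)

print("Ranked by raw count:", [r["name"] for r in by_count])
print("Ranked by ARR:      ", [r["name"] for r in by_arr])
```

The two orderings disagree, which is the whole problem: a single-factor ranking silently picks one bias over another.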

Key Facts: Why Product Prioritization Needs a Scoring Model

- 74% of product managers report that customer feedback influences their roadmap, but only 31% have a formal system for quantifying that influence. The rest rely on qualitative impressions and internal advocacy, per a Pendo State of Product Leadership report.

- Features prioritized using structured customer-impact scoring (incorporating ARR weight, frequency, and churn risk) have 40% higher adoption rates at launch than features prioritized through informal processes, per Productboard research on product-led companies.

- HiPPO-driven prioritization (highest-paid person's opinion) affects roadmap decisions in 58% of mid-market SaaS companies, even when customer feedback data is available. The gap is the absence of a structured weighting model, per Product Management Institute.

The Three Scoring Philosophies

Before building the composite, understand which philosophy each component reflects. Teams will debate which one "wins," and the answer is that none of them wins in isolation.

ARR-weighted scoring prioritizes by revenue impact: the sum of ARR from all accounts that flagged an issue determines its score weight. This is right for protecting top-line revenue and for roadmaps that serve enterprise-first strategies. It's wrong as the only input because it systematically undervalues SMB accounts even when SMB represents a growth segment the company is actively trying to expand. Customer health scoring with sales context covers how to fold expansion pipeline and renewal risk into the same account record, so the ARR figure CS Ops pulls for the scoring model reflects current business reality, not a six-month-old CRM snapshot.

Complaint-frequency scoring prioritizes by volume of unique accounts reporting. This is right for broad adoption plays and PLG models where the metric is how many customers use the feature, not how much revenue they represent. It's wrong as the only input because it ignores revenue impact and gives equal weight to a $5K account and a $200K account.

At-risk-weighted scoring prioritizes by churn risk correlation. Accounts that are at-risk or in churn conversation get elevated weight when calculating the business case for a feature. This is right for retention-first quarters and high-churn environments. It's wrong as the only input because it means the roadmap is being driven by the sickest accounts rather than the most valuable ones.

The composite model incorporates all three. The weights between them are a business decision, and making that decision explicit is itself valuable. The next section shows exactly how to calculate it.

Building the Composite Customer-Impact Score

Step 1: Gather the inputs for each backlog item

For every item being scored, CS needs to provide four data points:

Factor 1: ARR affected. The sum of ARR from all accounts that have reported this issue or requested this feature. Pull this from the CS platform, not from memory. If you don't have tagged feedback tied to accounts, this step doesn't work, which is why the support tickets to product backlog pipeline and shared feedback taxonomy are prerequisites.

Factor 2: Account count. The number of unique accounts that have reported the issue, expressed as penetration of your total account base and normalized to a 0-10 scale. If you have 200 accounts and 20 have flagged the issue, that's a 10% penetration rate. The worked example below caps penetration at 20%, so 20% or more scores 10 and 10% scores 5. Normalizing this way keeps account counts comparable across items regardless of whether the absolute number is 5 or 50.

Factor 3: Churn risk weight. The number of at-risk accounts (health score below threshold, open churn conversation, or renewal within 90 days) that have reported the issue, multiplied by a risk coefficient. The standard coefficient is 2.0. An at-risk account counts double in the scoring model because the business cost of losing that account is higher and more immediate.

Factor 4: Strategic account flag. A binary: does any named/strategic account appear in the affected account list? Named accounts are accounts that appear on the company's named account list, typically top-ARR accounts or key logos. This flag adds a fixed bonus to the score because strategic accounts carry relationship and reference value beyond their ARR.
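Factors 2 and 3 are the two inputs that need a small calculation before they can be scored. The sketch below shows one way to compute them, under stated assumptions: the 20% penetration cap comes from the worked example later in this article, while the `HEALTH_THRESHOLD` value of 60, the `Account` fields, and the sample accounts are hypothetical, since the text only says "health score below threshold."

```python
from dataclasses import dataclass
from datetime import date, timedelta

PENETRATION_CAP = 0.20               # cap taken from the worked example
HEALTH_THRESHOLD = 60                # hypothetical; the text only says "below threshold"
RENEWAL_WINDOW = timedelta(days=90)  # "renewal within 90 days"
RISK_COEFFICIENT = 2.0               # the standard coefficient


@dataclass
class Account:
    name: str
    health_score: int
    open_churn_conversation: bool
    renewal_date: date


def normalize_account_count(reporting: int, total: int) -> float:
    """Factor 2: penetration mapped onto 0-10, capped at PENETRATION_CAP."""
    if total <= 0:
        raise ValueError("total must be positive")
    return min(reporting / total / PENETRATION_CAP, 1.0) * 10


def is_at_risk(acct: Account, today: date) -> bool:
    """Factor 3: any one of the three at-risk conditions qualifies."""
    renewing_soon = today <= acct.renewal_date <= today + RENEWAL_WINDOW
    return (acct.health_score < HEALTH_THRESHOLD
            or acct.open_churn_conversation
            or renewing_soon)


def weighted_at_risk(accounts: list[Account], today: date) -> float:
    """Count at-risk accounts and apply the risk coefficient."""
    return sum(is_at_risk(a, today) for a in accounts) * RISK_COEFFICIENT


today = date(2025, 1, 15)
reporting = [
    Account("Acme", 45, False, date(2025, 11, 1)),   # low health score
    Account("Birch", 80, False, date(2025, 3, 1)),   # renews within 90 days
    Account("Cedar", 85, False, date(2025, 12, 1)),  # healthy
]
print(normalize_account_count(20, 200))   # 20 of 200 accounts reporting
print(weighted_at_risk(reporting, today))
```

Keeping the thresholds as named constants makes them easy to document alongside the weight rationale.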

Step 2: Apply the scoring formula

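Customer-Impact Score =

(ARR Affected / Total Company ARR × 40)

+ (Normalized Account Count / 10 × 25)

+ (At-Risk Accounts × 2.0 / Max Possible At-Risk Score × 20)

+ (Strategic Account Flag × 15)

Normalized Account Count is on the 0-10 scale from Factor 2, so it is divided by 10 before the 25-point weight is applied. Strategic Account Flag is 1 or 0.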

The weights (40/25/20/15) sum to 100 and can be adjusted based on your company's current strategic priority. In a retention-focused quarter, increase the at-risk weight. In an enterprise expansion quarter, increase the ARR weight. Document the weight rationale when you set it.
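The formula and its adjustable weights can be sketched as a single function. This is an illustration under assumptions, not a reference implementation: the function name, parameter names, and the retention-quarter weights (30/25/30/15) are hypothetical, and the at-risk ratio is capped at 1.0 so the composite stays within 0-100.

```python
DEFAULT_WEIGHTS = {"arr": 40, "accounts": 25, "at_risk": 20, "strategic": 15}


def customer_impact_score(arr_affected, total_arr, normalized_accounts,
                          at_risk_accounts, max_at_risk_score, strategic,
                          weights=DEFAULT_WEIGHTS, risk_coefficient=2.0):
    """Composite 0-100 score; normalized_accounts is on the 0-10 scale."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    arr_part = arr_affected / total_arr * weights["arr"]
    accounts_part = normalized_accounts / 10 * weights["accounts"]
    # Cap the ratio so an unusually large at-risk list cannot exceed the weight.
    risk_ratio = min(at_risk_accounts * risk_coefficient / max_at_risk_score, 1.0)
    risk_part = risk_ratio * weights["at_risk"]
    strategic_part = weights["strategic"] if strategic else 0
    return arr_part + accounts_part + risk_part + strategic_part


# Hypothetical retention-quarter weighting: shift 10 points from ARR to at-risk.
RETENTION_WEIGHTS = {"arr": 30, "accounts": 25, "at_risk": 30, "strategic": 15}

# Inputs: $840K of $8M ARR, normalized count 7.8, 4 at-risk accounts, cap of 30.
inputs = dict(arr_affected=840_000, total_arr=8_000_000, normalized_accounts=7.8,
              at_risk_accounts=4, max_at_risk_score=30, strategic=False)

default_score = customer_impact_score(**inputs)
retention_score = customer_impact_score(**inputs, weights=RETENTION_WEIGHTS)
print(round(default_score, 2), round(retention_score, 2))
```

Rejecting weights that don't sum to 100 keeps scores comparable from one quarter to the next when the weights change.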

Step 3: Worked numerical example (4 competing backlog items)

Company context: Mid-market SaaS company. Total ARR: $8M. Total accounts: 180. Named accounts (top 20 by ARR): 20 accounts.

Item A: Bulk export (row limit increase)

- Accounts reporting: 28 unique accounts

- ARR affected: $840,000

- At-risk accounts in the list: 4

- Strategic account flag: No (no named accounts affected)

Scoring:

- ARR component: ($840K / $8M) × 40 = 4.2

- Account count component: 28/180 = 15.6% penetration. Penetration is capped at 20% for the maximum score of 10, so the normalized score is 15.6/20 × 10 = 7.8, and 7.8 × 25/10 = 19.5

- At-risk component: (4 × 2.0) = 8 at-risk weighted accounts. The maximum possible at-risk score is set at 30 for this company (based on roughly 15% of accounts being at risk in any period). 8/30 × 20 = 5.3

- Strategic flag: 0

Item A Total: 4.2 + 19.5 + 5.3 + 0 = 29.0

Item B: Calendar integration (native sync)

- Accounts reporting: 11 unique accounts

- ARR affected: $1,320,000

- At-risk accounts in the list: 1

- Strategic account flag: Yes (2 named accounts affected)

Scoring:

- ARR component: ($1.32M / $8M) × 40 = 6.6

- Account count component: 11/180 = 6.1%, normalize: 6.1/20 × 10 = 3.1 × 25/10 = 7.6

- At-risk component: (1 × 2.0) = 2. 2/30 × 20 = 1.3

- Strategic flag: 15

Item B Total: 6.6 + 7.6 + 1.3 + 15 = 30.5

Item C: Mobile app offline mode

- Accounts reporting: 6 unique accounts

- ARR affected: $190,000

- At-risk accounts in the list: 3

- Strategic account flag: No

Scoring:

- ARR component: ($190K / $8M) × 40 = 0.95

- Account count component: 6/180 = 3.3%, normalize: 3.3/20 × 10 = 1.7 × 25/10 = 4.1

- At-risk component: (3 × 2.0) = 6. 6/30 × 20 = 4.0

- Strategic flag: 0

Item C Total: 0.95 + 4.1 + 4.0 + 0 = 9.05

Item D: Custom field types (multi-select)

- Accounts reporting: 41 unique accounts

- ARR affected: $510,000

- At-risk accounts in the list: 2

- Strategic account flag: Yes (1 named account affected)

Scoring:

- ARR component: ($510K / $8M) × 40 = 2.55

- Account count component: 41/180 = 22.8%, cap at 20% max → 10 × 25/10 = 25

- At-risk component: (2 × 2.0) = 4. 4/30 × 20 = 2.7

- Strategic flag: 15

Item D Total: 2.55 + 25 + 2.7 + 15 = 45.25

Composite score summary and PM recommendation:

| Backlog Item | ARR Component | Account Count | At-Risk | Strategic Flag | Total Score | PM Recommendation |
|---|---|---|---|---|---|---|
| D: Custom field types | 2.55 | 25.0 | 2.7 | 15 | 45.25 | Priority 1: high volume + strategic account flag drives this above higher-ARR items |
| B: Calendar integration | 6.6 | 7.6 | 1.3 | 15 | 30.5 | Priority 2: high ARR per account and strategic flag; relatively low account penetration |
| A: Bulk export | 4.2 | 19.5 | 5.3 | 0 | 29.0 | Priority 3: strong account count and at-risk weight; watch if at-risk count grows |
| C: Mobile offline mode | 0.95 | 4.1 | 4.0 | 0 | 9.05 | Priority 4: small base; at-risk weight prevents it from being fully deprioritized |

What the scoring surfaces: Item D wins even though it has neither the highest ARR impact (Item B does) nor the highest at-risk weight (Item A does). It wins because it combines maximum account penetration with a named account, plus enough at-risk weight to confirm it's a retention risk, not just a feature request.

This is the value of the composite: it produces a defensible ranking that no single-factor model could produce.
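The four-item example can be reproduced in a few lines. The sketch below uses the company context and item inputs from the worked example; the `score` helper and the tuple layout are illustrative choices. Because it carries full precision instead of rounding intermediate values by hand, individual totals can differ from the table by about 0.1, but the ranking is the same.

```python
TOTAL_ARR = 8_000_000
TOTAL_ACCOUNTS = 180
MAX_AT_RISK_SCORE = 30   # cap used in the worked example
PENETRATION_CAP = 0.20   # 20% penetration earns the full account-count weight

# item -> (accounts reporting, ARR affected, at-risk accounts, strategic flag)
items = {
    "A: Bulk export":          (28,   840_000, 4, False),
    "B: Calendar integration": (11, 1_320_000, 1, True),
    "C: Mobile offline mode":  (6,    190_000, 3, False),
    "D: Custom field types":   (41,   510_000, 2, True),
}


def score(accounts, arr, at_risk, strategic):
    """Composite score with the default 40/25/20/15 weights."""
    arr_part = arr / TOTAL_ARR * 40
    count_part = min(accounts / TOTAL_ACCOUNTS / PENETRATION_CAP, 1.0) * 25
    risk_part = min(at_risk * 2.0 / MAX_AT_RISK_SCORE, 1.0) * 20
    return arr_part + count_part + risk_part + (15 if strategic else 0)


# Highest composite score first.
ranked = sorted(items, key=lambda name: score(*items[name]), reverse=True)
for name in ranked:
    print(f"{name}: {score(*items[name]):.1f}")
```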

How CS Feeds the Score

The scoring model is only as good as the data CS provides. For each backlog item, CSMs need to log:

- Issue description in the shared taxonomy (not free text)

- Account list: every account that flagged this issue, with their account record linked

- ARR per account (pulled from the CRM, not estimated)

- Renewal date for the three accounts closest to renewal

- Health score at the time of logging

- Any verbatim customer language worth preserving for the product team

Who enriches the score: CS Ops, not individual CSMs. Individual CSMs tag feedback in the CS platform. CS Ops aggregates, enriches with ARR data from the CRM, and runs the scoring formula. This separation keeps the data consistent and prevents individual CSMs from inadvertently inflating scores for their own accounts.
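A minimal sketch of that aggregation step, assuming feedback arrives as (taxonomy tag, account ID) pairs and ARR comes from a CRM lookup. The tags, account IDs, ARR values, and the `aggregate` function are all hypothetical; a real CS platform export will look different.

```python
from collections import defaultdict

# Hypothetical tagged feedback rows: (taxonomy_tag, account_id)
feedback = [
    ("bulk-export", "acct-1"),
    ("bulk-export", "acct-1"),   # repeat report from the same account
    ("bulk-export", "acct-2"),
    ("calendar-sync", "acct-3"),
]

# Hypothetical CRM lookup: account_id -> current ARR
crm_arr = {"acct-1": 10_000, "acct-2": 200_000, "acct-3": 120_000}


def aggregate(feedback, crm_arr):
    """Roll tagged feedback up to unique accounts and ARR per taxonomy tag."""
    accounts_by_tag = defaultdict(set)
    for tag, account_id in feedback:
        accounts_by_tag[tag].add(account_id)   # the set drops repeat reports
    return {
        tag: {"account_count": len(ids),
              "arr_affected": sum(crm_arr[i] for i in ids)}
        for tag, ids in accounts_by_tag.items()
    }


summary = aggregate(feedback, crm_arr)
print(summary["bulk-export"])
```

Counting unique accounts, not individual reports, means an account that logs the same issue repeatedly contributes its ARR only once.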

Cadence: Batch scoring bi-weekly. Real-time scoring introduces noise. A single new report shouldn't shift priorities in a weekly sprint cycle. Bi-weekly scoring gives enough time for the data to accumulate before it influences roadmap discussions.

How Product Uses the Score

Where the score lives: In the product backlog as a custom field, not in a separate CS spreadsheet. If the score lives in a spreadsheet, it will be referenced occasionally. If it's a field in the backlog tool, it's visible every time a PM opens a backlog item.

How to weight it against other inputs: Customer-impact score is one input among several in roadmap prioritization. Technical debt, strategic product bets, regulatory requirements, and engineering complexity all belong in the same conversation. A reasonable starting weight for customer-impact score is 30-40% of the overall prioritization score.
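One way to picture that blend is sketched below, assuming every other input is also scored 0-100 and the weight left over after the customer-impact share is split evenly among them. The even split, the input names, and the sample scores are all assumptions for illustration; the article only specifies the 30-40% starting range.

```python
def overall_priority(customer_impact, other_scores, customer_weight=0.35):
    """Blend the customer-impact score with other 0-100 inputs.

    Assumption: the remaining weight is split evenly across other_scores.
    """
    if not other_scores:
        raise ValueError("need at least one other input")
    remaining = 1.0 - customer_weight
    other_avg = sum(other_scores.values()) / len(other_scores)
    return customer_weight * customer_impact + remaining * other_avg


# Hypothetical 0-100 scores for the non-customer inputs (higher = stronger case).
other = {
    "technical_debt": 20,
    "strategic_bet": 70,
    "regulatory": 0,
    "engineering_feasibility": 50,
}

# 45.25 is Item D's customer-impact score from the worked example.
blended = overall_priority(45.25, other, customer_weight=0.35)
print(round(blended, 2))
```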

The score is a multiplier, not a mandate: A customer-impact score of 45 doesn't mean the feature ships next sprint. It means the feature has a strong customer case that should influence the prioritization conversation. A PM who overrides a high-scoring item because of technical constraints or strategic considerations isn't ignoring the system. They're using their judgment, informed by the system. That's the intended use.

Edge Cases and Tie-Breakers

Low-ARR account with a disproportionately loud voice: A $10K account whose CSM is very active and a good writer will naturally produce more logged feedback than a $200K account with a more passive CSM relationship. The ARR component handles this automatically. The low-ARR account's feedback contributes proportionally less to the ARR component regardless of how many times the issue is logged.

Single enterprise account driving a score that would otherwise lose: This is working as designed, not a bug. If a $600K account is the only one reporting an issue and that one account represents 7.5% of total ARR, the ARR component alone produces a meaningful score. Add a strategic flag if they're a named account and the composite score reflects their actual business importance.

At-risk accounts all wanting the same off-strategy feature: Score the item using the model. Then the PM reviews the recommendation and makes a judgment call: "This item scores 28 primarily because of at-risk weight, but the feature is inconsistent with our current product strategy. We can't build it. What can CS do to retain these accounts without a product change?" That's the right conversation. And it's a better conversation than the one that starts with "these customers are going to churn unless we build this."

Pitfalls: How Teams Corrupt the Model

McKinsey's research on top product managers found that the best PMs treat customer data as one input among several. They use scoring models to inform prioritization, not to replace the judgment calls that require strategic context, technical feasibility, and competitive awareness.

CS cherry-picks which accounts to include. If CSMs selectively log feedback from accounts they know will inflate a score, the model produces a biased output. Solve this structurally: all logged feedback items go into the aggregate automatically. CSMs don't choose which accounts get included in the scoring. If an account's CSM logged the feedback using the standard taxonomy, it's in.

Scores not updated when account status changes. An account that renewed and went from yellow to green still has their churn-risk weight from six months ago if nobody updates it. CS Ops should run a quarterly account status refresh: update health scores, renewal timelines, and named account status for all accounts in the scoring database. The quarterly customer-feedback review is the natural checkpoint for this refresh, where product and CS jointly validate whether last quarter's scores predicted the right outcomes before recalibrating the weights.
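The refresh itself can start as a simple staleness check. The sketch below flags accounts whose status was last refreshed more than about a quarter ago; the 90-day interval, the account IDs, and the `stale_accounts` helper are hypothetical.

```python
from datetime import date, timedelta

REFRESH_INTERVAL = timedelta(days=90)  # roughly one quarter; an assumption


def stale_accounts(last_refreshed: dict[str, date], today: date) -> list[str]:
    """Return accounts whose status is older than the refresh interval."""
    return sorted(
        account for account, refreshed in last_refreshed.items()
        if today - refreshed > REFRESH_INTERVAL
    )


# Hypothetical record of when each account's status was last updated.
last_refreshed = {
    "acct-1": date(2025, 1, 10),
    "acct-2": date(2025, 6, 1),
}
print(stale_accounts(last_refreshed, today=date(2025, 7, 1)))
```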

Treating the score as a ranking engine rather than an input. The model ranks backlog items by customer impact. It doesn't rank by what to build next. Engineering complexity, strategic fit, and technical debt all belong in the conversation. Teams that start treating the customer-impact score as the entire prioritization answer will make technically incoherent decisions, shipping high-scoring features that can't be built in the time the roadmap expects them.

How This Connects to the Pattern Recognition and Feedback Systems

Customer-impact scoring is the quantification layer that sits above pattern recognition across CSMs. Pattern recognition identifies that a theme exists. Five CSMs have heard the bulk export complaint. Customer-impact scoring answers the business question: how much does it matter?

ARR-weighted feedback quantification covers the financial modeling layer in more detail, specifically how to normalize ARR impact across different account sizes. The feature-request graveyard problem is the downstream consequence of a backlog without scoring: requests accumulate, nothing ships, customers stop submitting.

Prioritizing customer feedback without drowning in it covers the intake filtering layer that ensures only scored, structured feedback reaches the product team in the first place. And the quarterly customer feedback review is where the scoring model gets validated against actual outcomes: did the items we prioritized produce the retention and adoption results the scores predicted?

Rework Analysis: In our analysis of mid-market SaaS prioritization patterns, the most common failure mode isn't choosing the wrong scoring formula. It's using the score as a ranking engine rather than an input. The 4-Factor Customer Impact Score is a multiplier on PM judgment, not a replacement for it. Teams that get the most value from the model run bi-weekly scoring cycles (not real-time), keep the score as a custom field directly in the backlog tool (not a separate spreadsheet), and do a quarterly weight recalibration where they compare last quarter's top-scoring items against actual outcomes. The recalibration session typically reveals whether the at-risk coefficient (2.0) is correctly scaled for the current churn environment. In high-churn quarters, a coefficient of 2.5-3.0 may better reflect the real business cost of losing at-risk accounts.
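To see what changing the coefficient does, the sketch below recomputes the at-risk component for Item A (4 at-risk accounts) at coefficients of 2.0, 2.5, and 3.0. It holds the maximum at-risk score at 30, as in the worked example; that is an assumption, and if the maximum were rescaled along with the coefficient, the ratio would not change at all.

```python
MAX_AT_RISK_SCORE = 30   # held fixed, as in the worked example
AT_RISK_WEIGHT = 20


def at_risk_component(at_risk_accounts, coefficient):
    """At-risk points for one item, capped at the full at-risk weight."""
    ratio = min(at_risk_accounts * coefficient / MAX_AT_RISK_SCORE, 1.0)
    return ratio * AT_RISK_WEIGHT


for coefficient in (2.0, 2.5, 3.0):
    print(coefficient, round(at_risk_component(4, coefficient), 1))
```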

Learn More

- ARR-Weighted Feedback: Quantifying Customer Voice

- Pattern Recognition Across CSMs: The Systemic Issue

- The Feature-Request Graveyard Problem

- Prioritizing Customer Feedback (Without Drowning in It)

- Quarterly Customer-Feedback Review (Joint)

Frequently Asked Questions

What is the 4-Factor Customer Impact Score?

The 4-Factor Customer Impact Score is a composite 0-100 scoring model that quantifies the customer business case for each product backlog item. The four factors are: ARR Affected (40% weight), Normalized Account Count (25% weight), At-Risk Account Weight with a 2.0 churn coefficient (20% weight), and Strategic Account Flag, a binary bonus for named/enterprise accounts (15% weight). The formula is: (ARR Affected / Total ARR × 40) + (Normalized Account Count / 10 × 25) + (At-Risk Accounts × 2.0 / Max Possible At-Risk Score × 20) + (Strategic Account Flag × 15), where Normalized Account Count is on a 0-10 scale. Weights sum to 100 and can be adjusted to match each quarter's strategic priority.

Why don't raw complaint counts work for product prioritization?

Raw counts produce three systematic biases. Volume bias: the feature that 50 small customers want beats the feature that 3 enterprise customers need, even when the enterprise feature represents more than 3x the ARR. Squeaky wheel bias: customers who write detailed requests and respond to every survey are the most engaged quartile. They're not representative, and the silent churners never appear in complaint counts. Recency bias: whatever came up this month gets prioritized over the chronic long-tail issue with more total reports over eight months. The 4-Factor model addresses all three by normalizing across ARR, applying a churn coefficient, and looking at cumulative frequency rather than recency.

How does the at-risk account weight work in the scoring model?

At-risk accounts (defined as accounts with a health score below threshold, an open churn conversation, or a renewal within 90 days) receive a 2.0 churn coefficient when they appear in the affected account list. An at-risk account counts double in the scoring model because the business cost of losing that account is higher and more immediate than the cost of a healthy account experiencing the same friction. In the worked example: Item A (bulk export) scores a 5.3 on the at-risk component because 4 at-risk accounts are affected, applying 2.0× each against the maximum possible at-risk score of 30. That at-risk weight elevates Item A to Priority 3 despite lower ARR per account than Item B.

What is the strategic account flag and when does it apply?

The strategic account flag is a binary bonus score of 15 points applied when at least one named/enterprise account appears in the list of affected accounts. Named accounts are defined as the company's top-ARR accounts by a pre-agreed count (typically the top 20 accounts by ARR). The flag applies because strategic accounts carry relationship and reference value beyond their ARR figure. In the worked example, Items B and D both receive the 15-point flag. Combined with its maximum account penetration score, the flag is what lifts Item D (custom field types) to Priority 1 despite a lower ARR impact than Item B.

How often should customer-impact scores be updated?

Bi-weekly. Real-time scoring introduces noise. A single new account report shouldn't shift priorities in a weekly sprint cycle. Bi-weekly scoring gives enough time for data to accumulate before it influences roadmap discussions. Additionally, CS Ops should run a quarterly account status refresh: updating health scores, renewal timelines, and named account status for all accounts in the scoring database. An account that renewed and went from yellow to green still has its churn-risk weight from six months ago if nobody updates it, which produces a score that overstates retention risk for a now-stable account.

How should product teams weight the customer-impact score against other prioritization inputs?

A reasonable starting weight for customer-impact score is 30-40% of the overall prioritization score. The remaining weight covers technical debt, strategic product bets, regulatory requirements, and engineering complexity. A customer-impact score of 45 doesn't mean the feature ships next sprint. It means the feature has a strong customer case that should be a prominent factor in the prioritization conversation. Per McKinsey research on top product managers, elite PMs treat customer data as one input among several, using scoring models to inform prioritization rather than to replace the judgment calls that require strategic context and technical feasibility awareness.

Senior Operations & Growth Strategist
