April 18, 2026


Win/Loss Analysis Without Bias: A Repeatable Process for Sales Teams

When a rep loses a deal, they say "price." When they win, they say "relationship." Both answers are probably wrong, and both feel completely true to the rep who lived through that deal.

It isn't dishonesty. It's a structural problem. Reps are too close to their deals to analyze them objectively. They have a real emotional stake in the outcome and a real incentive to rationalize what happened in a way that doesn't reflect poorly on their effort or judgment. The CRM reinforces this. Loss reasons are usually a dropdown menu that a rep fills in five minutes after a bad call, picking whichever option feels least like "I blew this deal."

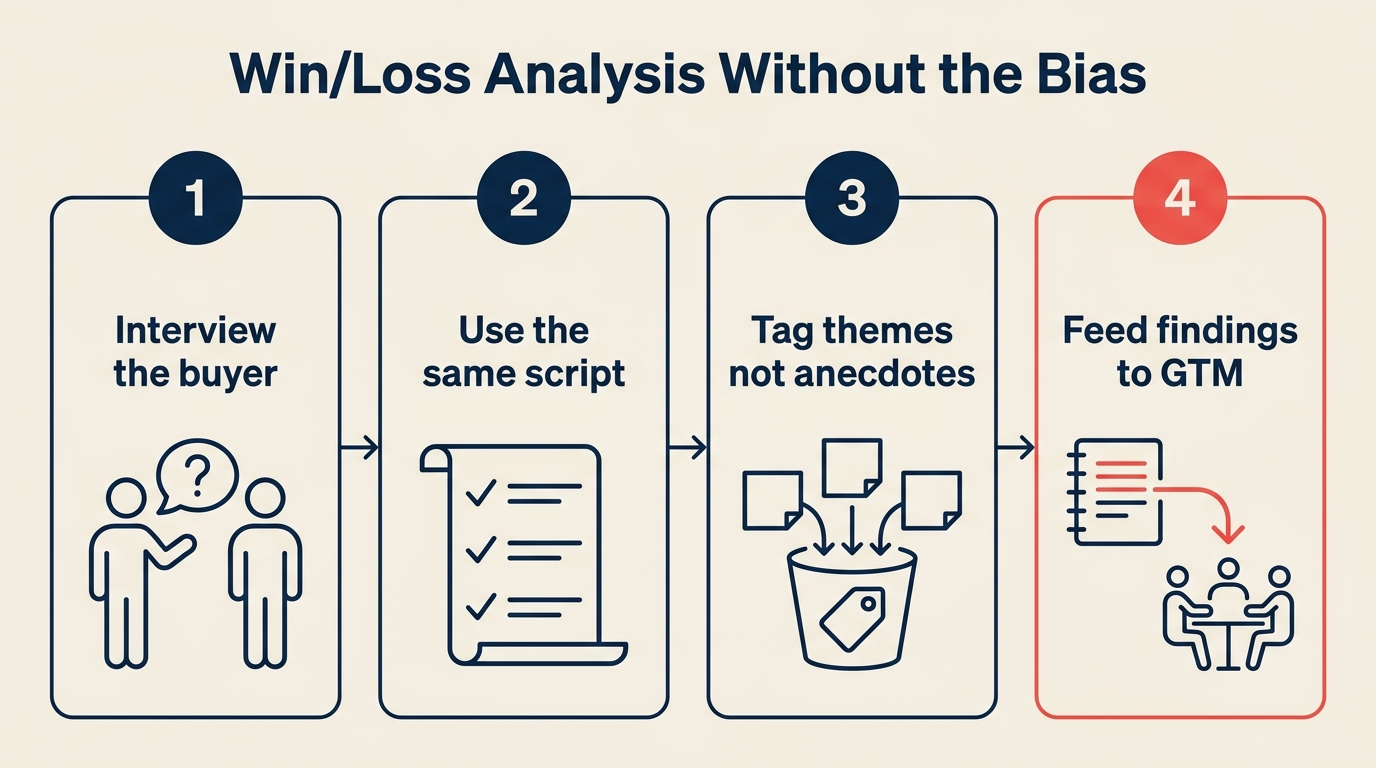

Win/loss analysis only produces actionable intelligence if you separate data collection from the people most invested in the outcome. Forrester research on buyer intelligence programs found that companies with formal win/loss programs improve their competitive win rate by 15 to 30% over two years. Here is a repeatable process that does this. It also pairs well with your lost-deal review process: the internal debrief and the buyer interview serve different purposes, and both are worth running.

The Four Lies Reps Tell After Every Deal

They aren't malicious. They're reflexive. But they're worth naming because they show up consistently on every team:

After a loss:

- "We lost on price." (Real reason: value was never established, so price became the deciding factor.)

- "The champion lost internal support." (Real reason: the rep didn't multi-thread and had a single point of failure.)

- "They went with the incumbent." (Often true, but rarely investigated further. Why did the incumbent win?)

- "It wasn't the right time." (A timing objection in the final stage is usually a polite version of "we didn't see enough value to move forward.")

After a win:

- "We won on the relationship." (Relationships matter, but they aren't the reason buyers spend budget.)

- "Our product was clearly better." (Competitors believe the same thing.)

The CRM makes this worse. When loss reasons are self-reported and selected from a short list, teams pile up months of "price" losses and draw the wrong conclusion: "We need to be cheaper." But the real pattern might be that deals without executive contact close at 20% while deals with it close at 65%. That signal disappears in a dropdown.
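Here is a minimal sketch of how to surface that kind of signal. Instead of grouping closed deals by the reason the rep selected, segment them by an attribute you can observe, in this case whether an executive was engaged. The field names `won` and `has_exec_contact` are hypothetical and not part of any particular CRM's schema. The sample data is illustrative only and was built to mirror the 20% vs. 65% example above.

```python
from collections import defaultdict


def win_rate_by(deals, attribute):
    """Win rate for each value of `attribute` across closed deals.

    `deals` is a list of dicts with a boolean "won" key plus the attribute
    being segmented on (hypothetical field names, not a CRM schema).
    """
    totals = defaultdict(int)
    wins = defaultdict(int)
    for deal in deals:
        key = deal[attribute]
        totals[key] += 1
        if deal["won"]:
            wins[key] += 1
    return {key: wins[key] / totals[key] for key in totals}


# Illustrative data only: 20 deals with executive contact, 20 without.
deals = (
    [{"has_exec_contact": True, "won": i < 13} for i in range(20)]
    + [{"has_exec_contact": False, "won": i < 4} for i in range(20)]
)
print(win_rate_by(deals, "has_exec_contact"))  # {True: 0.65, False: 0.2}
```

The same function works for any observable attribute, such as deal size band or lead source. That is the point: the segments come from facts about the deal, not from the rep's explanation of it.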

Step 1: Separate Data Collection from Coaching

The most important structural decision in your win/loss process is who conducts the buyer interview.

It should not be the rep's direct manager.

When a manager calls a buyer after a loss, the buyer knows they're talking to the rep's boss. They will soften the feedback, avoid specifics that could hurt the rep, and give polite answers instead of honest ones. The same dynamic applies to wins. Buyers will tell a senior person what they think that person wants to hear.

Options that work better:

- A sales operations analyst who doesn't carry quota

- A customer success manager who wasn't involved in the deal

- A neutral third party (for high-value accounts, some teams use an external researcher)

- A sales peer who didn't work on the deal

The interviewer's goal is to capture what the buyer actually thinks. It is not to coach the rep, explain the company's position, or save the deal. It is just to listen and record.

This separation also protects the rep. Buyer feedback that arrives through a neutral third party lands differently than feedback from a manager after a loss call. It feels like market intelligence, not a performance critique.

Step 2: The Buyer Interview Framework, Eight Questions

Sequence matters. Start with questions the buyer can answer easily and work toward the ones that require more candor.

Research published in Harvard Business Review on customer feedback methods shows that buyers are significantly more candid when interviewed by a neutral third party than when approached directly by a sales or account rep.

Opening (easy, factual):

"Can you walk me through how your evaluation process worked? Who was involved in the decision?" This establishes the stakeholder map and shows whether the rep identified all the relevant players.

"What were the two or three most important criteria for your team going into this evaluation?" Compare the answer with what the rep logged. If the rep didn't have the key criteria, that's a qualification failure.

Middle (where the real signal is):

"What did [your company] do well during the evaluation process?" Even after a loss, buyers will acknowledge strengths. This reveals what is actually working.

"What could we have done differently?" Most buyers will answer this if the relationship was cordial. The answer is almost always more specific than "price."

"How did you evaluate the different options you were considering?" This gets at the evaluation criteria and what the buyer was actually comparing, not just "us vs. them."

"What ultimately made the difference in the decision?" This is the deciding factor. Compare it with what the rep reported.

Closing (requires more candor):

"Was there anything in our process or our team that made the evaluation harder than it needed to be?" This surfaces process failures: slow follow-up, confusing proposals, unclear pricing, missed stakeholders.

"Is there anything else you'd like us to know that could help us improve?" Open-ended. Some buyers will tell you exactly what you need to hear if you give them room.

What NOT to ask:

- Don't ask "Did we price this correctly?" (it leads the witness)

- Don't ask "What did [competitor] promise you?" (it sounds defensive)

- Don't ask anything that sounds like you're trying to reopen the deal

Step 3: Internal Debrief Structure

The internal debrief with the rep is separate from the buyer interview. It happens after you have the interview data. Don't run the two at the same time.

Agenda (30 to 40 minutes):

5 min: Set the context. This isn't a performance review. It's a learning session. The goal is to understand the deal well enough to improve the next one.

10 min: The rep's perspective. Ask the rep to describe what they think drove the outcome. Don't interrupt; just listen. You'll compare it with the buyer's answers next.

10 min: Compare with the buyer data. Present the buyer interview findings without attribution: "The buyer mentioned that the evaluation criteria included [X]. Was that on your radar?" You're not saying "the buyer told us you missed this." You're exploring the gap.

10 min: Root cause. Pick the two biggest gaps between what the rep thought happened and what the buyer reported. Ask: "What would have needed to be true for you to know about [gap] earlier in the deal?"

5 min: One takeaway. Ask the rep what they would do differently at a specific moment in the deal if they could go back. That's the coaching hook.

Step 4: Pattern Recognition Over Time

Individual deal analysis is useful. Pattern recognition is where win/loss analysis pays for itself.

According to Gartner's analysis of competitive intelligence practices, B2B sales teams that systematically categorize and review loss reasons are 2x more likely to identify fixable process failures than teams that rely on rep-reported CRM data.

Minimum sample size before drawing conclusions: 10 to 15 deals per pattern analyzed.

One rep losing five deals to a specific objection may say something about that rep. Ten reps losing to the same competitor with the same objection says something about your competitive positioning.
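You can check the difference between a rep-level pattern and a team-level one mechanically. This sketch assumes each buyer-confirmed loss has been tagged with a rep, a competitor, and an objection; these are hypothetical fields. It groups losses by competitor and objection and labels each group. The `min_deals=10` default follows the low end of the 10-to-15 floor above. The `min_reps=3` value is an assumed threshold for "spread across the team," not a figure from this article.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Loss:
    rep: str
    competitor: str
    objection: str


def classify_patterns(losses, min_deals=10, min_reps=3):
    """Label each (competitor, objection) pattern as rep-level or team-level.

    min_deals follows the 10-to-15-deal floor; min_reps is a hypothetical
    threshold for how many distinct reps make a pattern "team-wide".
    """
    groups = defaultdict(list)
    for loss in losses:
        groups[(loss.competitor, loss.objection)].append(loss)

    labels = {}
    for pattern, items in groups.items():
        reps = {loss.rep for loss in items}
        if len(items) >= min_deals and len(reps) >= min_reps:
            labels[pattern] = "positioning"    # many reps, enough deals
        elif len(reps) == 1:
            labels[pattern] = "rep-specific"   # concentrated in one rep: a coaching topic
        else:
            labels[pattern] = "keep watching"  # not enough evidence yet
    return labels


# Illustrative data: one rep with five losses vs. ten reps with one loss each.
losses = [Loss("ana", "CompetitorX", "integration") for _ in range(5)]
losses += [Loss(f"rep{i}", "CompetitorY", "price") for i in range(10)]
print(classify_patterns(losses))
# {('CompetitorX', 'integration'): 'rep-specific', ('CompetitorY', 'price'): 'positioning'}
```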

Loss categorization taxonomy:

Categorize each loss under one primary reason (taken from the buyer interview, not from the rep's report):

| Category | Description |
|---|---|
| Competitive loss | Buyer chose a named competitor |
| Status quo | Buyer decided not to change anything |
| Budget | The deal was real, but budget was cut or unavailable |
| Process fit | Product or implementation didn't fit their workflow |
| Internal champion | Champion left, lost support, or was overruled |
| Evaluation failure | Rep didn't reach all the decision-makers |
| Timing | Decision pushed to a future period (not a true loss; track separately) |

After 15 to 20 interviews, look at which categories come up most often. Suppose "evaluation failure" (the rep didn't reach all the decision-makers) is your top loss reason. Then you have a qualification and multi-threading problem, not a pricing problem, and the coaching priority is different.
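Once the primary reasons come from buyer interviews, the tally itself is simple. This is a minimal sketch, assuming each interview yields one category label from the table above. Timing is counted separately rather than ranked as a loss. The `min_interviews=15` default reflects the low end of the 15-to-20 guidance. The function name and return shape are illustrative, not a prescribed format.

```python
from collections import Counter

TIMING = "Timing"  # tracked separately: a deferral, not a true loss


def loss_summary(primary_reasons, min_interviews=15):
    """Rank buyer-reported primary loss categories, setting Timing aside.

    `primary_reasons` holds one category label per buyer interview, using
    the labels from the taxonomy table.
    """
    deferred = sum(1 for reason in primary_reasons if reason == TIMING)
    ranking = Counter(r for r in primary_reasons if r != TIMING).most_common()
    return {
        "enough_data": len(primary_reasons) >= min_interviews,
        "ranking": ranking,            # most frequent real loss reason first
        "timing_deferred": deferred,
    }


# Illustrative data: 16 interviews.
reasons = (
    ["Evaluation failure"] * 6
    + ["Competitive loss"] * 4
    + ["Budget"] * 3
    + ["Timing"] * 3
)
summary = loss_summary(reasons)
print(summary["enough_data"], summary["ranking"][0], summary["timing_deferred"])
# True ('Evaluation failure', 6) 3
```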

Step 5: The Competitive Intelligence Layer

Win/loss interviews are one of the best sources of competitive intelligence you have. But use them carefully.

What to extract:

- What the competitor promised that your team didn't

- How the competitor framed the comparison

- What the competitor did in the sales process that the buyer responded well to

Where to be cautious:

- Buyers often relay competitor claims that aren't accurate

- One buyer's perception of a competitor isn't market truth

- Competitive intelligence should inform positioning, not trigger panic

Build a running document of competitive themes from your win/loss interviews. After 10 interviews involving a specific competitor, you'll have a pattern. That pattern is worth sharing with your product and marketing teams, because it often reflects real product or positioning gaps.

Don't let competitive intelligence distort your process. If you change your qualification criteria or playbook because of a competitor's pitch in two deals, you're chasing noise. Wait for the pattern.
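A running themes document can be as simple as a per-competitor tally that won't report anything until the pattern threshold is met. In this sketch, the `CompetitiveThemes` class, the competitor names, and the theme strings are all hypothetical. The `min_interviews=10` default mirrors the 10-interview guidance above. Each theme is counted at most once per interview, so a single talkative buyer cannot inflate it.

```python
from collections import Counter, defaultdict


class CompetitiveThemes:
    """Running log of themes buyers raised about each competitor."""

    def __init__(self, min_interviews=10):
        self.min_interviews = min_interviews
        self.interviews = Counter()          # interviews per competitor
        self.themes = defaultdict(Counter)   # theme tallies per competitor

    def record(self, competitor, themes):
        """Log one interview; each theme counts at most once per interview."""
        self.interviews[competitor] += 1
        self.themes[competitor].update(set(themes))

    def shareable(self):
        """Themes only for competitors with enough interviews to be a pattern."""
        return {
            competitor: self.themes[competitor].most_common()
            for competitor, count in self.interviews.items()
            if count >= self.min_interviews
        }


log = CompetitiveThemes()
for _ in range(10):
    log.record("CompetitorA", ["faster onboarding"])
for _ in range(2):
    log.record("CompetitorB", ["lower list price"])  # two deals: still noise
print(log.shareable())  # {'CompetitorA': [('faster onboarding', 10)]}
```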

Step 6: Close the Loop

Win/loss analysis only has value if the findings change something. A process that produces insights nobody reads wastes everyone's time.

Define three outputs for each batch of win/loss analysis (quarterly or monthly):

Output 1: Playbook updates. Make one or two specific changes to your sales playbook based on what you learned. If "buyers frequently didn't understand our implementation timeline" is a pattern, the playbook needs a section on setting timeline expectations during discovery.

Output 2: Qualification criteria review. If deals are consistently being lost because the rep didn't identify a key decision-maker, that's a qualification failure. Update your qualification checklist to require stakeholder mapping before a deal can advance past a specific pipeline stage.
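If your CRM or deal desk enforces stage gates, you can express the qualification change as a checklist that blocks advancement. This is a sketch under stated assumptions: the stage names, the choice of `Solution` as the gate stage, and the two checklist items are hypothetical placeholders. Replace them with your own pipeline definitions.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical stage names and gate; substitute your own pipeline stages.
STAGES = ["Discovery", "Qualification", "Solution", "Proposal", "Negotiation"]
GATE_STAGE = "Solution"  # deals cannot enter this stage or later without a stakeholder map


@dataclass
class Deal:
    name: str
    stage: str
    stakeholders: List[str] = field(default_factory=list)
    decision_maker: Optional[str] = None


def blocking_items(deal, target_stage):
    """Checklist items that stop `deal` from moving to `target_stage`."""
    if STAGES.index(target_stage) < STAGES.index(GATE_STAGE):
        return []  # the gate does not apply before the gate stage
    missing = []
    if not deal.stakeholders:
        missing.append("stakeholder map")
    if deal.decision_maker is None:
        missing.append("key decision-maker identified")
    return missing


deal = Deal("Example Co", "Qualification", stakeholders=["IT lead"])
print(blocking_items(deal, "Solution"))  # ['key decision-maker identified']
```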

Output 3: Coaching priorities. Which specific skill gap shows up most often in lost deals? That becomes the team's coaching theme for Q2.

Findings should go to the sales manager and the VP. They shouldn't go directly to the rep without a coaching conversation first. Raw buyer feedback without context can demoralize instead of develop.

Common Pitfalls

Analyzing only recent losses. Recency bias produces conclusions based on the last 30 days of deals instead of the last 6 months. Run your analysis on the last 20 to 30 closed deals, not just the freshest ones.
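Counting deals instead of days is a simple guard against recency bias. This sketch assumes each deal record carries a hypothetical `closed_on` date, or `None` while the deal is still open. It returns the most recent N closed deals, wins and losses alike. The illustrative data shows how a 30-day window can capture far fewer deals than the 20-to-30-deal sample recommended above.

```python
from datetime import date, timedelta


def analysis_window(deals, n=30):
    """Return the n most recently closed deals (wins and losses).

    Counting deals instead of days keeps a slow month from shrinking the
    sample. "closed_on" is a hypothetical field: a date, or None if open.
    """
    closed = [d for d in deals if d["closed_on"] is not None]
    closed.sort(key=lambda d: d["closed_on"])
    return closed[-n:]


# Illustrative data: 40 closed deals, one every 5 days, plus one open deal.
start = date(2025, 10, 1)
deals = [{"id": i, "closed_on": start + timedelta(days=5 * i)} for i in range(40)]
deals.append({"id": "open", "closed_on": None})

window = analysis_window(deals)
latest = max(d["closed_on"] for d in deals if d["closed_on"] is not None)
last_30_days = [
    d for d in deals
    if d["closed_on"] is not None and d["closed_on"] >= latest - timedelta(days=30)
]
print(len(window), len(last_30_days))  # 30 7
```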

Relying on rep-reported reasons. The loss reasons in the CRM dropdown are a starting point, not a conclusion. Every serious win/loss program supplements CRM data with buyer interviews.

Treating every loss as a process failure. Some deals are lost because the competitor was genuinely a better fit for that buyer. That's information, not failure. Don't over-engineer your process trying to win deals you probably shouldn't win.

Ignoring wins. Analyzing wins matters as much as analyzing losses. When reps don't know why they win, they can't reliably repeat it. A McKinsey study on B2B sales performance found that high-performing sales organizations review won deals with the same rigor as losses. They use the data to codify repeatable behaviors rather than attributing success to individual talent. Interview buyers after significant wins, not only after losses.

Win/Loss Interview Templates

Buyer Interview Question Bank (for the interviewer):

OPENING

1. Can you walk me through how your evaluation process worked?

2. What were your 2 or 3 main selection criteria?

MIDDLE

3. What did [Company] do well during the process?

4. What could we have done differently?

5. How did you compare the options you were considering?

6. What ultimately made the difference in your decision?

CLOSING

7. Was there anything in our process that made your evaluation harder?

8. Is there anything else you'd like us to know?

Loss Categorization Log:

Deal: [Company] | Date: | AE:

Primary loss reason reported by the buyer:

Category: [ ] Competitive [ ] Status quo [ ] Budget [ ] Process fit

[ ] Champion [ ] Evaluation failure [ ] Timing

Rep-reported reason (CRM):

Gap between the rep's and the buyer's perspective:

Pattern tag (if applicable):
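If you keep the log in a spreadsheet or a script rather than on paper, each row maps naturally onto a record type. This is a minimal sketch. It assumes the rep's CRM reason is also mapped onto the same taxonomy; the `rep_category` field is a hypothetical addition and is not part of the template. The `category_gap` property flags entries where the rep's view and the buyer's view fall into different categories. That gap is what the debrief is meant to explore.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class Category(Enum):
    COMPETITIVE = "Competitive"
    STATUS_QUO = "Status quo"
    BUDGET = "Budget"
    PROCESS_FIT = "Process fit"
    CHAMPION = "Champion"
    EVALUATION_FAILURE = "Evaluation failure"
    TIMING = "Timing"


@dataclass
class LossLogEntry:
    """One row of the loss categorization log."""
    company: str
    logged_on: date
    ae: str
    buyer_reason: str                        # primary loss reason in the buyer's words
    category: Category                       # assigned from the buyer interview
    rep_reason_crm: str                      # what the rep selected in the CRM
    rep_category: Optional[Category] = None  # hypothetical: rep reason mapped onto the same taxonomy
    gap_notes: str = ""
    pattern_tag: Optional[str] = None

    @property
    def category_gap(self) -> bool:
        """True when the rep's and the buyer's views land in different categories."""
        return self.rep_category is not None and self.rep_category is not self.category


entry = LossLogEntry(
    company="Example Co",
    logged_on=date(2026, 3, 2),
    ae="AE name",
    buyer_reason="Their CFO was never part of our conversations",
    category=Category.EVALUATION_FAILURE,
    rep_reason_crm="Competitor was cheaper",
    rep_category=Category.COMPETITIVE,
    pattern_tag="no-exec-contact",
)
print(entry.category_gap)  # True
```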

What to Do Next

Before building a full win/loss program, run a small pilot. Do five buyer interviews on last quarter's losses, conducted by someone other than the rep's direct manager.

Pick the five losses where you're genuinely curious about the real reason, not the ones where the outcome was obvious. Prepare the eight-question framework. Send a brief note from the VP asking the buyer for 20 minutes of feedback.

After five interviews, you'll have more insight into your real win/loss drivers than six months of CRM data analysis would give you. That's how you build the internal case for the full process. For the CRM side of this, the forecast accuracy tracking material in the pipeline management library covers how to structure the data collection that makes pattern recognition possible.


Head of Enterprise Solutions
