Apr 18, 2026

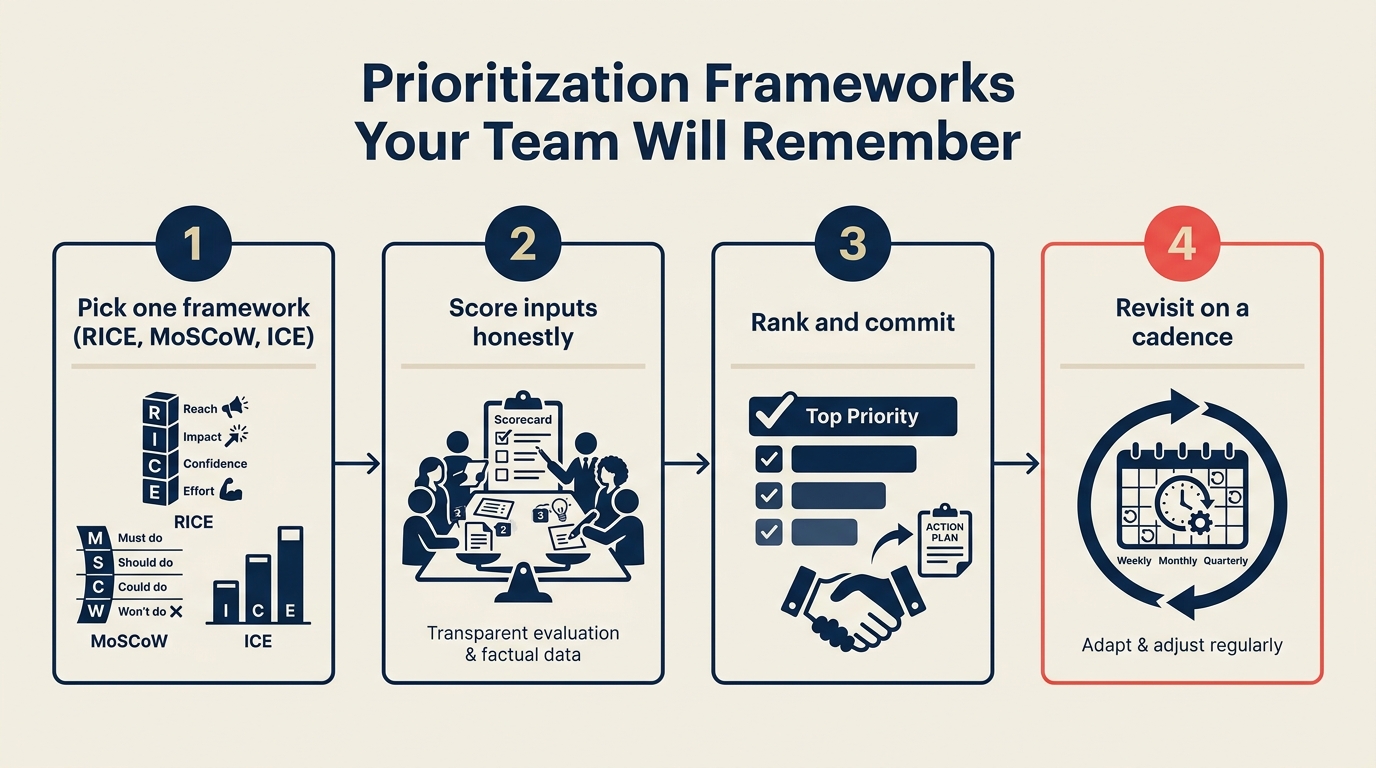

A Prioritization Framework Your Team Will Remember

Most teams have been through a prioritization framework exercise at some point. Someone presents a 2x2 matrix. Someone else introduces RICE scoring. A product manager runs a workshop. Everyone nods. Then the next sprint starts, and prioritization decisions go back to being driven by whoever speaks loudest in the planning meeting.

The problem is not that the frameworks don't work. The problem is that teams treat prioritization as a quarterly planning event rather than an ongoing operational habit. By the time the next planning cycle arrives, the framework has been forgotten, and every "what should I work on next?" question starts from zero.

A mediocre framework applied consistently is worth more than a perfect framework applied once. The goal is not to find the ideal scoring model. The goal is to give the team a shared vocabulary and a default decision process they can use without a meeting. Document that vocabulary in the team's operating agreement so new members inherit the framework instead of having to rediscover it.

Why Prioritization Fails

Before choosing a framework, it helps to understand which failure mode you are actually trying to fix. Teams lose prioritization discipline for different reasons.

Everything is urgent. When stakeholders label every request as high priority, the team has no signal about what really matters. "High priority" becomes meaningless, and whoever makes the most noise gets their work done first. This is as much a culture problem as a prioritization problem, but a shared scoring framework adds a layer of objectivity that makes the process harder to game.

The framework is too complicated to use in the moment. An 8-factor weighted scoring model may produce excellent prioritization decisions in theory. But nobody opens it when they have three competing requests on a Tuesday afternoon. If the framework needs a spreadsheet and 30 minutes to operate, it will not be used for day-to-day decisions.

The highest-paid voice wins. HiPPO, the Highest Paid Person's Opinion, is the default prioritization mechanism in most organizations when there is no shared framework. It is fast and decisive, and it produces poor results over time because it ignores evidence and bypasses the people closest to the work. MIT Sloan Management Review research on decision quality found that decisions made without a structured framework are reversed or revised more than twice as often as decisions made with explicit criteria, a significant hidden cost for organizations.

Nobody has agreed on which framework to use when. Teams sometimes have several frameworks in circulation and use them interchangeably. Using RICE for daily task triage produces over-engineered decisions. Using gut feel for roadmap-level investments produces under-examined decisions. The right tool depends on the type of decision.

Three Frameworks, Three Use Cases

Rather than prescribing one universal framework, give the team three, one for each scale of decision. This sounds like more complexity, but it is actually less. When the decision is clearly "daily task triage," everyone knows to use ICE. When it is clearly a "roadmap-level tradeoff," everyone knows to use RICE.

Framework 1: ICE for Quick Day-to-Day Decisions

ICE stands for Impact, Confidence, and Ease. It is the right framework for: "I have three tasks I could work on today. Which one should I do first?"

Impact: How much does this move the needle on our current priorities? Score 1-10.

Confidence: How sure am I that this will deliver the expected impact? Score 1-10. (This is the factor most often skipped, and the one that catches the most overconfident bets.)

Ease: How easy or quick is this to execute relative to the alternatives? Score 1-10. High ease = low friction = faster output.

ICE score = (Impact + Confidence + Ease) / 3

But you do not need to do the math every time. The value of ICE is in the structure of the prompt, not the number. When you are deciding between three tasks, asking yourself "which one has the highest impact, which am I most confident in, and which is most achievable right now?" gets you to the right answer faster than intuition alone.

Where ICE is most useful: sprint-level task selection, deciding which of several bug fixes to prioritize, choosing between two feature requests when resources are limited.

Where ICE falls short: decisions involving significant investment (ICE does not capture long-term value well), and decisions where reach matters more than depth of impact.
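For the occasions when you do want the number, here is a minimal Python sketch of ICE scoring. It assumes the score is the simple average of the three 1-10 ratings. The `Task` class, the function names, and the three sample tasks are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    impact: int      # 1-10: how far this moves current priorities
    confidence: int  # 1-10: how sure we are the impact will materialize
    ease: int        # 1-10: higher means less friction to execute


def ice_score(task: Task) -> float:
    """Simple average of the three ratings (an assumed reading of the formula)."""
    for value in (task.impact, task.confidence, task.ease):
        if not 1 <= value <= 10:
            raise ValueError(f"{task.name}: ratings must be between 1 and 10")
    return (task.impact + task.confidence + task.ease) / 3


def rank_by_ice(tasks: list[Task]) -> list[Task]:
    """Return the tasks ordered from highest to lowest ICE score."""
    return sorted(tasks, key=ice_score, reverse=True)


# Hypothetical tasks, invented for this example.
today = [
    Task("Fix checkout bug", impact=8, confidence=9, ease=7),
    Task("Refactor billing module", impact=6, confidence=5, ease=3),
    Task("Update onboarding copy", impact=4, confidence=8, ease=9),
]
ranked = rank_by_ice(today)
for task in ranked:
    print(f"{task.name}: {ice_score(task):.1f}")
```

Note how the low-impact copy update outranks the refactor purely on confidence and ease, which is the kind of tradeoff ICE is meant to surface.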

Framework 2: RICE for Roadmap-Level Tradeoffs

RICE stands for Reach, Impact, Confidence, and Effort. It is the right framework for: "We are planning Q3. We could do any of these six initiatives. Which three should we commit to?"

Reach: How many users, customers, or business units are affected over a given period? Use real numbers: users per quarter, deals per month, whatever your unit of measurement is.

Impact: How much does this move the key metric per person or unit affected? Score it as a multiplier (0.25 = minimal, 0.5 = low, 1 = medium, 2 = high, 3 = massive).

Confidence: How sure are you of your reach and impact estimates? Score it as a percentage (100% = very confident, 80% = fairly confident, 50% = speculative).

Effort: How many person-months will this take? Include all roles.

RICE score = (Reach x Impact x Confidence) / Effort

The resulting score lets you compare projects of different types and scales. A project with a RICE score of 450 is prioritized above one with a score of 280, not because someone decided so, but because the math reflects the team's actual estimates.

The most important benefit of RICE is not the final number. It is the conversation the scoring process generates. When you fill in the table and discover that the project everyone assumed was high priority has half the RICE score of a project nobody has been advocating for, that is a productive conflict to surface. Forrester research on product prioritization found that product teams using a structured scoring framework ship features with measurably higher adoption rates, mainly because the scoring process forces alignment on what users actually value versus what internal stakeholders prefer.

Where RICE is most useful: quarterly or sprint planning, roadmap decisions, comparing heterogeneous initiatives.

Where RICE falls short: tactical decisions (too heavy), anything that needs a real-time judgment, situations where reach is very hard to estimate.
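The RICE formula is easy to get wrong when confidence is entered as a whole number instead of a fraction, so a small sketch may help. The function name and the two sample initiatives below are hypothetical; the inputs were chosen only so the results echo the 450 versus 280 comparison above.

```python
def rice_score(reach: float, impact: float, confidence: float, effort: float) -> float:
    """Compute (reach * impact * confidence) / effort.

    reach:      people or units affected per period (e.g. users per quarter)
    impact:     multiplier from the scale above (0.25, 0.5, 1, 2, or 3)
    confidence: fraction between 0 and 1 (0.8 means 80%)
    effort:     person-months across all roles
    """
    if effort <= 0:
        raise ValueError("effort must be positive")
    if not 0 < confidence <= 1:
        raise ValueError("confidence must be a fraction in (0, 1], e.g. 0.8 for 80%")
    return reach * impact * confidence / effort


# Hypothetical initiatives; the numbers are invented for illustration.
assumed_favorite = rice_score(reach=700, impact=1, confidence=0.8, effort=2)
quiet_contender = rice_score(reach=900, impact=1, confidence=1.0, effort=2)

print(f"Assumed favorite: {assumed_favorite:.0f}")
print(f"Quiet contender:  {quiet_contender:.0f}")
```

The validation on `confidence` is a design choice in this sketch: rejecting a value such as 80 catches the most common data-entry mistake before it inflates a score a hundredfold.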

Framework 3: The Eisenhower Matrix for Individual Workload Triage

The Eisenhower Matrix is the right framework for a specific personal or managerial problem: "My inbox is full and my task list exceeds my capacity. What actually needs my attention?"

The matrix sorts work along two dimensions:

- Urgent vs. not urgent (time pressure)

- Important vs. not important (alignment with strategic goals)

| | Urgent | Not urgent |
|---|---|---|
| Important | Do now | Schedule |
| Not important | Delegate | Eliminate |

The matrix is most valuable for exposing the "urgent but not important" quadrant: reactive work that creates a constant feeling of busyness without advancing anything that matters. Most managers spend too much time here and not enough in the "important but not urgent" quadrant, where strategy, team development, and preventive work live. The framework is based on a principle attributed to President Eisenhower and popularized in Stephen Covey's work. Its durability reflects a real structural truth about how knowledge work gets misallocated. A McKinsey study of executive time use found that senior leaders who actively audit their own time allocation against strategic priorities consistently outperform those who do not.

Team-level application: at the start of each week, every team member sorts their list into the four quadrants and shares the result in a short async thread. When the whole team can see that three people are carrying "urgent but not important" work that could be delegated or eliminated, that is a conversation worth having.

Where the Eisenhower Matrix is most useful: individual weekly planning, manager workload triage, identifying work to delegate or stop.

Where it falls short: it does not help with "which important-and-urgent task comes first?", and it requires honest self-assessment (the matrix only works if you are honest about what is truly important).
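The matrix boils down to two yes/no judgments per item, which the following sketch makes explicit. The function names and the sample to-do list are hypothetical. The hard part, deciding honestly whether something is important, stays with the person filling it in.

```python
def eisenhower_action(urgent: bool, important: bool) -> str:
    """Map the two yes/no judgments to the quadrant's default action."""
    if important:
        return "do now" if urgent else "schedule"
    return "delegate" if urgent else "eliminate"


def triage(items: dict[str, tuple[bool, bool]]) -> dict[str, list[str]]:
    """Group item names by action; each value is an (urgent, important) pair."""
    quadrants: dict[str, list[str]] = {
        "do now": [],
        "schedule": [],
        "delegate": [],
        "eliminate": [],
    }
    for name, (urgent, important) in items.items():
        quadrants[eisenhower_action(urgent, important)].append(name)
    return quadrants


# Hypothetical weekly list for one person: name -> (urgent, important).
my_week = {
    "Production incident follow-up": (True, True),
    "Draft Q3 hiring plan": (False, True),
    "Reply to vendor survey": (True, False),
    "Reorganize old wiki pages": (False, False),
}
board = triage(my_week)
for action, names in board.items():
    print(f"{action}: {names}")
```

Posting the resulting `board` in the weekly async thread gives the team the shared view described above.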

Choosing One Framework per Use Case

The biggest prioritization mistake teams make is not using the wrong framework. It is switching frameworks depending on who is in the room. When the choice of framework is situational rather than structural, frameworks become tools for post-hoc rationalization rather than genuine decision support.

Pick one framework per decision type and make it the team's explicit default:

- ICE = daily and sprint-level task decisions

- RICE = roadmap and quarterly planning decisions

- Eisenhower = individual workload management and delegation decisions

Document this in the team's operating agreement. When someone comes to sprint planning with a different framework, the response is: "We use ICE for this. Let's score it with ICE and see where it lands." That is not rigidity. It is consistency. Consistency is what makes a framework trustworthy over time.

The 15-Minute Weekly Priority Ranking

One of the highest-ROI team rituals you can add is a short priority ranking at the start of every sprint. It takes 15 minutes and prevents a great deal of mid-sprint confusion.

The format:

- (5 minutes) Everyone lists their top 3 items for the sprint in a shared document, with a brief ICE note.

- (5 minutes) The team reviews the combined list. Flag any item where priorities conflict or where someone's top 3 depends on someone else's work being finished first.

- (5 minutes) Agree on the team's top 5 for the sprint. Not a top 20. A top 5. Everything else is below the line.

That "below the line" distinction matters. A team that cannot say what it is NOT doing this sprint has no real priorities. It just has a long list. A clear line keeps the sprint honest. It also makes capacity planning far easier: once you know the top 5, you check whether real hours support those commitments.

The output is a shared priority list that everyone can point to when a new request comes in. "Is this above or below the line for this sprint?" is a much faster conversation than "should we do this?" with no frame of reference.
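As a rough illustration of the ritual's output, the sketch below merges each person's top 3 into one ranked list and draws the line after five items. It is meant as a first draft for the team to discuss, not a replacement for the discussion. The function name, the people, the items, and the ICE notes are all hypothetical, and keeping the highest ICE note when two people list the same item is an assumption of this sketch.

```python
def draft_team_line(
    personal_lists: dict[str, list[tuple[str, float]]],
    line: int = 5,
) -> tuple[list[str], list[str]]:
    """Merge personal top-3 lists and split them into (above_line, below_line).

    personal_lists maps a person to their (item, ICE note) pairs.
    When an item appears on several lists, the highest ICE note is kept.
    """
    best: dict[str, float] = {}
    for items in personal_lists.values():
        for item, score in items:
            best[item] = max(score, best.get(item, score))
    # Highest score first; ties broken alphabetically so the order is stable.
    ranked = sorted(best, key=lambda item: (-best[item], item))
    return ranked[:line], ranked[line:]


# Hypothetical sprint input from three teammates.
sprint_input = {
    "Ana": [("Checkout bug", 8.0), ("Search latency", 7.0), ("Docs refresh", 4.0)],
    "Ben": [("Checkout bug", 7.5), ("SSO rollout", 6.5), ("Billing export", 6.0)],
    "Chen": [("Search latency", 7.3), ("Onboarding emails", 5.5), ("Dark mode", 3.0)],
}
above_line, below_line = draft_team_line(sprint_input)
print("Above the line:", above_line)
print("Below the line:", below_line)
```

When a new request arrives mid-sprint, checking it against `above_line` is the code equivalent of asking whether it sits above or below the line.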

ICE Scoring Worksheet

Here is a simple format for ICE scoring that a team can fill in together in under 10 minutes:

| Item | Impact (1-10) | Confidence (1-10) | Ease (1-10) | ICE score |
|---|---|---|---|---|
| [Task/feature A] | | | | |
| [Task/feature B] | | | | |
| [Task/feature C] | | | | |

ICE score = average of the three ratings = (Impact + Confidence + Ease) / 3

Do this scoring as a team, not individually. When scores diverge significantly (one person rates impact at 8 and another at 3), it is a sign that people hold different assumptions about what the item is supposed to achieve. Surface that before starting the work.
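One way to make those disagreements visible is to compare each rater's numbers before averaging them. The sketch below does that for a single item. The function name, the rater names, and the `max_spread` threshold of 3 points are assumptions chosen for illustration, and the team score again assumes ICE is the average of the three factors.

```python
from statistics import mean

FACTORS = ("impact", "confidence", "ease")


def summarize_ratings(
    ratings: dict[str, dict[str, int]],
    max_spread: int = 3,
) -> tuple[float, list[str]]:
    """Combine several raters' scores for ONE item.

    ratings maps a rater to their {"impact", "confidence", "ease"} scores.
    Returns the team ICE score and the factors where the gap between the
    highest and lowest rating exceeds max_spread.
    """
    averages = []
    disputed = []
    for factor in FACTORS:
        values = [scores[factor] for scores in ratings.values()]
        averages.append(mean(values))
        if max(values) - min(values) > max_spread:
            disputed.append(factor)
    return mean(averages), disputed


# Hypothetical ratings for one feature, mirroring the 8-versus-3 example.
feature_a = {
    "Ana": {"impact": 8, "confidence": 6, "ease": 5},
    "Ben": {"impact": 3, "confidence": 7, "ease": 5},
}
team_score, disputed = summarize_ratings(feature_a)
print(f"Team ICE score: {team_score:.2f}")
print("Discuss before starting:", disputed)
```

A non-empty `disputed` list is the cue to stop and talk: the averaged score hides exactly the disagreement the team needs to resolve.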

RICE Calculator Template

For planning-level decisions, use this structure:

| Initiative | Reach (per quarter) | Impact (multiplier) | Confidence (%) | Effort (person-months) | RICE score |
|---|---|---|---|---|---|
| [Initiative A] | | | | | |
| [Initiative B] | | | | | |
| [Initiative C] | | | | | |

RICE score = (Reach x Impact x Confidence %) / Effort

Sort by RICE score in descending order. Draw a cutoff line at your capacity limit. Everything above the line is committed; everything below it goes to the backlog.
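The sort-and-cut step can be sketched as follows. The `Initiative` class, the `plan_quarter` function, the 6 person-month capacity, and the four sample initiatives are hypothetical. The sketch draws a strict line at the first initiative that no longer fits, rather than skipping ahead to squeeze in smaller items, which is one possible reading of "cutoff line."

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    reach: float       # people or units affected per quarter
    impact: float      # multiplier: 0.25, 0.5, 1, 2, or 3
    confidence: float  # fraction, e.g. 0.8 for 80%
    effort: float      # person-months, all roles included

    @property
    def rice(self) -> float:
        return self.reach * self.impact * self.confidence / self.effort


def plan_quarter(
    initiatives: list[Initiative],
    capacity: float,
) -> tuple[list[Initiative], list[Initiative]]:
    """Rank by RICE, then split into (committed, backlog) at the capacity limit."""
    ranked = sorted(initiatives, key=lambda item: item.rice, reverse=True)
    committed: list[Initiative] = []
    backlog: list[Initiative] = []
    used = 0.0
    for index, item in enumerate(ranked):
        if used + item.effort > capacity:
            # The line is drawn here; this item and everything ranked below it waits.
            backlog = ranked[index:]
            break
        committed.append(item)
        used += item.effort
    return committed, backlog


# Hypothetical candidates and a hypothetical capacity of 6 person-months.
candidates = [
    Initiative("Self-serve upgrades", reach=900, impact=1, confidence=1.0, effort=2),
    Initiative("Admin dashboard", reach=700, impact=1, confidence=0.8, effort=2),
    Initiative("Mobile app rewrite", reach=2000, impact=0.5, confidence=0.5, effort=4),
    Initiative("Invoice reminders", reach=300, impact=2, confidence=0.8, effort=1),
]
committed, backlog = plan_quarter(candidates, capacity=6)
print("Committed:", [item.name for item in committed])
print("Backlog:  ", [item.name for item in backlog])
```

Keeping the line strict means the ranking, not leftover capacity, decides what ships. Teams that prefer to fill remaining capacity with smaller items can change the loop to continue instead of breaking.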

Common Mistakes

Scoring on gut feel without shared criteria. If "impact" means different things to different scorers, the scores are not comparable. Define what each score value means before scoring. For RICE reach, use specific units (users per quarter, deals per month). For ICE impact, define what a 9 looks like versus a 5.

Switching frameworks every quarter. If a new framework is introduced in every planning cycle, the team never builds the muscle memory that makes a framework useful. Pick your defaults and stick with them for at least two full quarters before evaluating whether to change.

Letting the highest-paid voice override the score. If the RICE score says initiative B is the top priority but the VP prefers initiative A, you have two options: feed the VP's reasoning into the scoring (they may have information that should update your estimates), or accept that the decision is political and record that explicitly. What you must not do is pretend the score supports the VP's preference when it does not. That destroys trust in the framework entirely.

Using RICE for everything. RICE is excellent for roadmap planning and terrible for "should I reply to this Slack message now or later?" Match the framework to the scale of the decision.

Connecting Prioritization to Your Team Operating System

Prioritization decisions do not happen in isolation. They feed into, and are fed by, several other team practices.

Your decision log should capture major prioritization decisions, especially those that cut scope or drop an item below the line. When someone asks three months later "why didn't we build X?", the decision log is where the answer lives.

Project scope decisions made during project kickoff set the frame for in-sprint prioritization. If the kickoff establishes clear success criteria and non-goals, sprint prioritization is mostly a matter of keeping work aligned with those commitments.

And your weekly status update should reflect the priority ranking. "We shipped X (which was the top priority), and Y moved to the next sprint because it was below the line this week" is a clearer status signal than a list of everything the team touched.

The Consistency Principle

Here is the honest truth about prioritization frameworks: the most sophisticated model imaginable will not fix a team where the loudest voice always wins or where every request is labeled urgent by the person making it.

Frameworks need organizational support to work. Managers need to protect the priority list from ad-hoc requests. Stakeholders need to understand that their requests will be scored and ranked, not dropped straight into the sprint. Leadership needs to model the process by running their own requests through the scoring system rather than bypassing it.

But when that context exists, or when you build it, consistency is what turns a framework from a planning artifact into an operational habit. Run ICE scores at every sprint planning meeting, even when the answer feels obvious. Build the RICE table every quarter, even when one initiative clearly dominates. Use the Eisenhower Matrix every Monday, even when you feel you already know your priorities.

The value compounds. After six months of consistent use, your team will not need the framework for most decisions. They will have internalized the questions well enough to answer them quickly. The framework becomes muscle memory, not a cheat sheet. Stanford Graduate School of Business research on habit formation in teams suggests that shared decision rituals become self-reinforcing after roughly 8-10 repetitions, which is why the first two months of adopting a framework feel heavy while the third and fourth start to feel natural.

Learn more: Explore the full Team Productivity Guide for more practical guidance on how high-performing teams make decisions and stay aligned. Related reading: the team norms conversation you have been avoiding, team-level focus blocks, and decision velocity as a competitive advantage.

Principal Product Marketing Strategist
